Your support team handles 3,000 calls a month. Your product team gets a monthly summary based on whatever the support manager remembered, filtered through a spreadsheet of ticket categories defined two years ago. The categories are too broad to be actionable.

The actual language customers use, the specific frustrations, the moments where they say “I almost cancelled because of this,” never reach the people who can fix the problem.

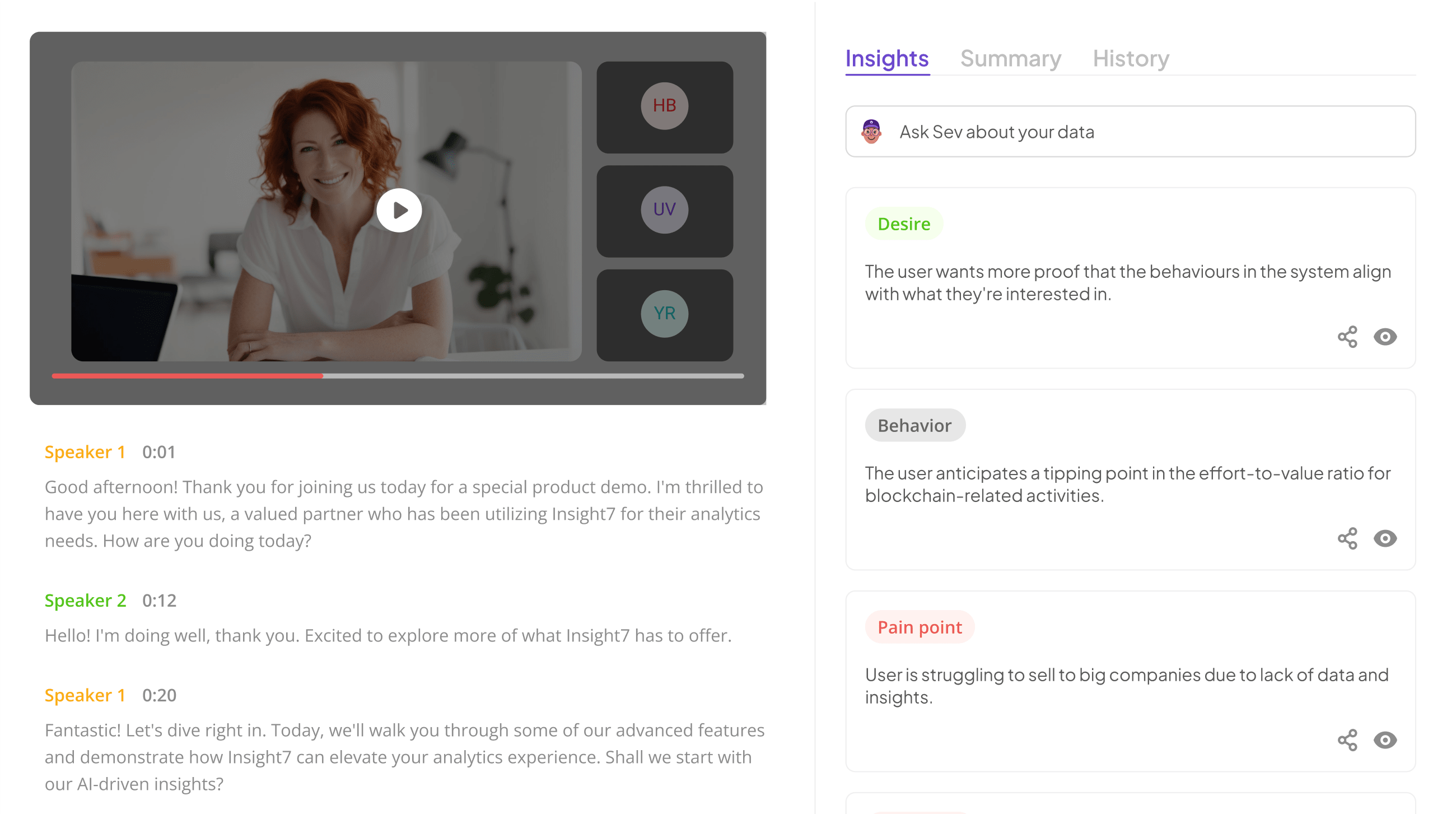

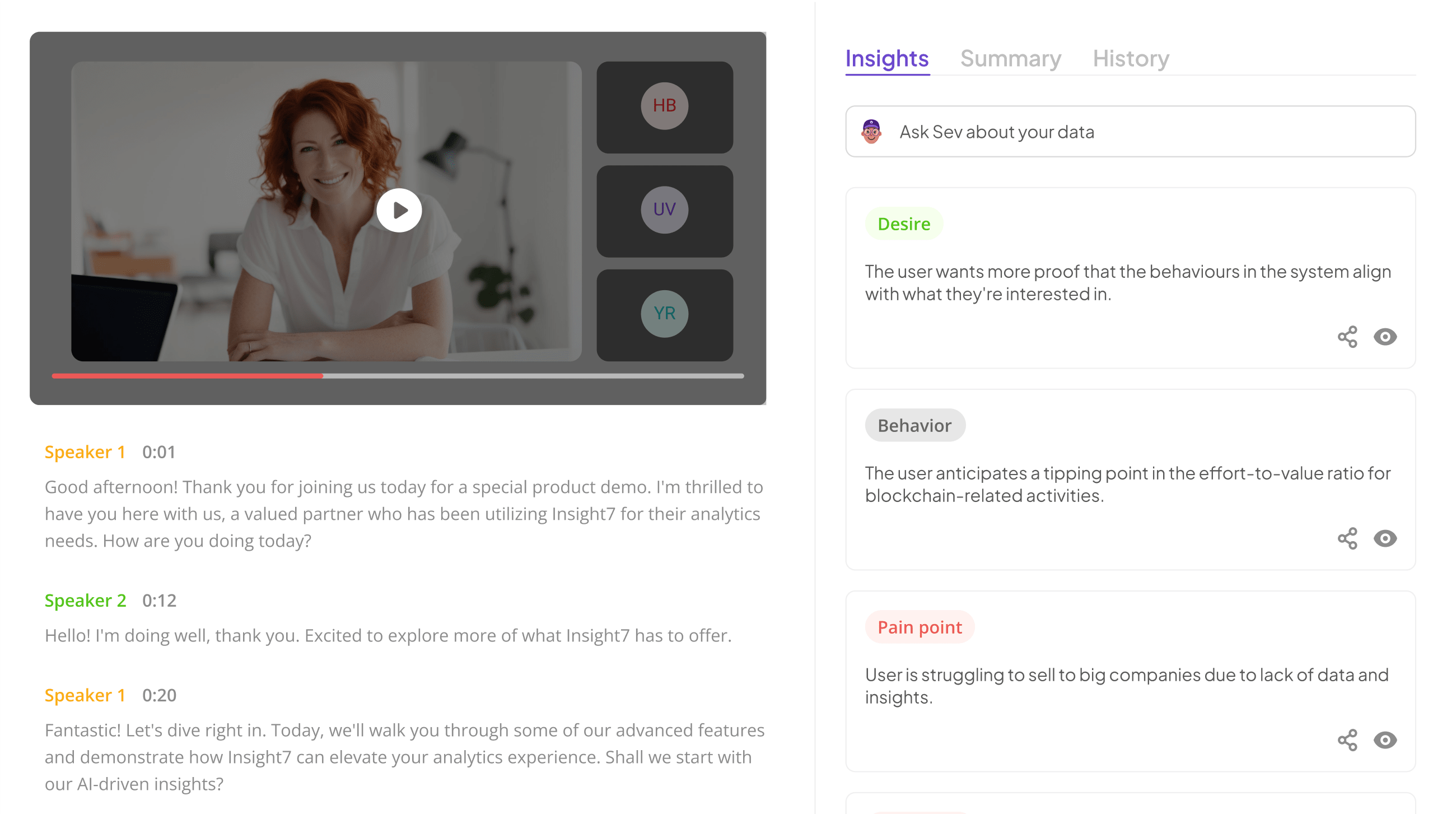

That is the gap between having call data and actually extracting product intelligence from it. The fastest way to identify pain points from calls with AI is to stop relying on samples and start analyzing every conversation automatically. Insight7’s call analytics platform does exactly that, scoring 100% of calls and surfacing recurring pain point themes with frequency data, sentiment context, and specific call evidence.

For mid-market companies with 40+ customer-facing reps generating thousands of interactions monthly, the difference between useful product intelligence and noise is whether you are analyzing every conversation or a manually curated sample.

Here is how this works in practice, where it outperforms manual methods, and where it still needs human judgment.

Why Manual Methods Fail to Identify Pain Points from Calls at Scale

Most support teams track pain points through some combination of ticket categorization, manager summaries, and occasional call listening sessions.

This approach works when call volume is low enough for a single person to stay close to the data. It breaks down predictably when three conditions converge.

First, call volume exceeds what any individual can review. A 60-rep support team generating 2,500 calls per month means even a dedicated analyst listening to 5% of calls hears 125 conversations and misses 2,375. The sample is not random, either. Analysts tend to review escalated calls, which biases the data toward extreme cases and misses the chronic mid-severity issues that drive quiet churn.

Second, ticket categories are too coarse for product decisions. “Billing issue” as a category does not tell the product team whether customers are confused by the invoice layout, frustrated by proration logic, or unable to find the payment portal. The specificity gap between what support categorizes and what product needs to act on is where most voice-of-customer programs lose signal.

Third, the feedback loop is too slow. By the time a quarterly support summary reaches the product roadmap meeting, the patterns are already stale. Customers who churned in January over a specific frustration are a data point in a March report that informs a June sprint. AI closes that loop by surfacing patterns as they emerge rather than after they have already caused damage.

How AI Extracts Pain Points from Call Data

AI-driven pain point identification works through three layers, each building on the previous one.

The first layer is transcription and structuring. Every call is transcribed and segmented into speaker turns. This converts unstructured audio into text that can be analyzed programmatically. Modern speech-to-text models handle accents, crosstalk, and industry terminology with high enough accuracy that the transcript is usable for pattern detection without manual correction on most calls.
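As a rough illustration of what "segmented into speaker turns" can look like once it is data, here is a minimal sketch. The `Turn` fields, the `"agent"`/`"customer"` labels, and the sample call are assumptions for the example, not the output format of any particular transcription product.

```python
from dataclasses import dataclass

@dataclass
class Turn:
    speaker: str    # hypothetical labels: "agent" or "customer"
    start_s: float  # offset into the call, in seconds
    text: str

def customer_statements(turns: list[Turn]) -> list[str]:
    """Return only what the customer said, in call order."""
    return [t.text for t in turns if t.speaker == "customer"]

# A made-up call used only to exercise the function.
call = [
    Turn("agent", 0.0, "Thanks for calling, how can I help?"),
    Turn("customer", 3.2, "I can't find where to pay my invoice."),
    Turn("agent", 8.9, "Let me walk you through the payment portal."),
    Turn("customer", 14.5, "I almost cancelled because of this."),
]

for statement in customer_statements(call):
    print(statement)
```

Keeping the speaker label on every turn is what lets later steps analyze customer language separately from agent scripts.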

The second layer is theme extraction. Natural language processing clusters similar customer statements across thousands of calls into recurring themes. Instead of relying on predefined ticket categories, the AI identifies themes from the actual language customers use. This surfaces pain points that existing categorization systems miss entirely because nobody thought to create a category for them. A theme like “customers expressing confusion about the difference between the two pricing tiers” emerges from pattern detection, not from someone deciding in advance to track it.
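To make the idea of grouping statements without predefined categories concrete, the sketch below clusters sentences by word overlap (Jaccard similarity). This is a deliberately simple stand-in: production systems typically rely on richer language models, and the stopword list, the `0.3` threshold, and the sample statements are all assumptions chosen for illustration.

```python
import re

# Tiny illustrative stopword list; a real one would be far longer.
STOPWORDS = {"a", "an", "and", "the", "i", "is", "to",
             "what", "where", "don't", "can't"}

def tokens(text):
    """Lowercase a statement and return its set of content words."""
    words = re.findall(r"[a-z']+", text.lower())
    return {w for w in words if w not in STOPWORDS}

def jaccard(a, b):
    """Share of words two sets have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def cluster(statements, threshold=0.3):
    """Greedily assign each statement to its most similar cluster,
    or start a new cluster if nothing is similar enough."""
    clusters = []  # each cluster: {"vocab": set of words, "members": list}
    for s in statements:
        t = tokens(s)
        best = max(clusters, key=lambda c: jaccard(t, c["vocab"]), default=None)
        if best is not None and jaccard(t, best["vocab"]) >= threshold:
            best["members"].append(s)
            best["vocab"] |= t
        else:
            clusters.append({"vocab": set(t), "members": [s]})
    return clusters

statements = [
    "What is the difference between the Basic and Pro pricing tiers?",
    "I don't understand the difference between the pricing tiers.",
    "Where is the payment portal?",
    "I can't find the payment portal.",
]

themes = cluster(statements)
for theme in themes:
    print(len(theme["members"]), theme["members"])
```

On these four statements the sketch produces two groups, one about pricing tiers and one about the payment portal, even though neither label was defined beforehand.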

The third layer is frequency and severity scoring. Not all pain points carry equal weight. AI ranks themes by how often they appear across the call population and by the sentiment intensity associated with them. A pain point mentioned in 8% of calls with strong negative sentiment is a different priority than one mentioned in 25% of calls with mild frustration. Insight7’s analytics surfaces both frequency and sentiment data so product teams can prioritize based on impact rather than recency or loudness.
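One way to see why the 8% and 25% cases call for different treatment is to score them. The sketch below is an assumed scoring scheme, not Insight7's method: the `Theme` fields, the 0-to-1 negativity scale, the theme names, and the `severity_weight` exponent are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Theme:
    name: str
    calls_mentioning: int
    mean_negativity: float  # hypothetical scale: 0.0 neutral, 1.0 strongly negative

def priority(theme, total_calls, severity_weight=1.0):
    """Frequency multiplied by sentiment intensity.
    A higher severity_weight makes intensity count for more."""
    frequency = theme.calls_mentioning / total_calls
    return frequency * theme.mean_negativity ** severity_weight

def ranked(themes, total_calls, severity_weight=1.0):
    """Theme names ordered from highest to lowest priority."""
    ordered = sorted(
        themes,
        key=lambda t: priority(t, total_calls, severity_weight),
        reverse=True,
    )
    return [t.name for t in ordered]

TOTAL_CALLS = 2500
themes = [
    Theme("export failures", 200, 0.9),  # 8% of calls, strong negative sentiment
    Theme("slow dashboard", 625, 0.3),   # 25% of calls, mild frustration
]

print(ranked(themes, TOTAL_CALLS, severity_weight=1.0))
print(ranked(themes, TOTAL_CALLS, severity_weight=2.0))
```

With equal weighting the more frequent theme ranks first; with intensity weighted more heavily the order flips. The weighting is a business decision, which is why both numbers should stay visible rather than being collapsed into one opaque score.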


Where This Creates Product Intelligence That Manual Methods Cannot

The specific advantage of analyzing every call rather than a sample is statistical validity. When a product manager sees that 34% of support calls in the last 30 days mention difficulty with the onboarding wizard, that is a prioritization signal backed by hundreds of data points. When the same insight comes from a support manager saying, “I’ve been hearing a lot about onboarding lately,” the product team has no way to gauge whether “a lot” means 5% of calls or 50%.
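The gap between "a lot" and a measured percentage can be illustrated with a confidence interval. The sketch below uses the standard Wilson score interval for a proportion and compares a 125-call sample with 2,500 analyzed calls. The call counts are assumed for the example, and the calculation treats each set of calls as a random draw from the underlying call stream, which a manually selected sample usually is not.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is about 95% confidence)."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half

# Assumed counts: roughly 34% of calls mention the onboarding wizard.
sample = wilson_interval(43, 125)    # analyst reviews 5% of 2,500 calls
full = wilson_interval(850, 2500)    # every call analyzed

sample_width = sample[1] - sample[0]
full_width = full[1] - full[0]

print(f"125 calls:   {sample[0]:.1%} to {sample[1]:.1%}")
print(f"2,500 calls: {full[0]:.1%} to {full[1]:.1%}")
```

With these inputs the interval from 125 calls is more than four times wider than the one from 2,500 calls, and that is before accounting for the selection bias in which calls get reviewed manually.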

The second advantage is speed. Patterns surface in days rather than quarters. A new pain point that emerges after a product update can be detected within the first week of calls rather than appearing in the next quarterly review. This enables product teams to ship fixes while the issue is still contained rather than after it has compounded into a churn driver.

The third advantage is granularity. AI can differentiate between related but distinct issues that manual categorization lumps together. “Customers confused by pricing” becomes three separate themes: customers who cannot find the pricing page, customers who find it but do not understand the tier differences, and customers who understand the tiers but feel the price-to-value ratio is wrong. Each of those requires a different response from the product team.

Where Human Judgment Still Matters

AI surfaces patterns. Humans decide what to do about them. Two areas where judgment remains essential:

Severity assessment requires business context. AI can tell you that 15% of calls mention a specific feature gap. It cannot tell you whether that gap is a strategic priority or an edge case that affects a segment you are intentionally not serving. The product manager’s role is to evaluate AI-surfaced pain points against the product strategy, not to accept every high-frequency theme as a roadmap item.

Root cause analysis often requires cross-functional investigation. AI can surface that customers are confused by a specific workflow. It cannot always determine whether the confusion stems from poor UX design, insufficient documentation, a sales team setting wrong expectations, or a genuine product limitation. That determination requires collaboration between product, support, and sometimes sales.

The most effective operating model uses AI to generate the signal and humans to determine the response. Insight7’s knowledge base captures these patterns as a living resource that product, support, and leadership teams can reference continuously rather than waiting for a periodic report.

Building a Feedback Loop That Actually Reaches the Product Team

Surfacing pain points is only valuable if the insight reaches someone who can act on it. Most voice-of-customer programs fail not at the identification stage but at the handoff. Three practices make the handoff work:

Shared dashboards, not forwarded reports. Product teams should have direct access to the same pain point data that the support team sees. When product managers can browse call themes, filter by time period, and drill into specific call examples, they build their own understanding rather than relying on someone else’s interpretation.

Frequency thresholds that trigger review. Instead of reviewing all pain points quarterly, set a threshold (for example, any theme appearing in more than 10% of calls within a 30-day window automatically enters the product triage queue). This creates a structured cadence without requiring manual escalation.
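The threshold rule described above can be sketched in a few lines. The call record shape (a `date` plus a list of `themes`), the function name, and the sample data are assumptions for illustration; the 10% threshold and 30-day window are the example values from the text.

```python
from datetime import date, timedelta

def themes_for_triage(calls, today, window_days=30, threshold=0.10):
    """Return themes mentioned in more than `threshold` of the calls
    that fall inside the trailing window ending on `today`."""
    cutoff = today - timedelta(days=window_days)
    recent = [c for c in calls if cutoff < c["date"] <= today]
    if not recent:
        return []
    counts = {}
    for c in recent:
        for theme in set(c["themes"]):  # count each theme once per call
            counts[theme] = counts.get(theme, 0) + 1
    return sorted(t for t, n in counts.items() if n / len(recent) > threshold)

# Hypothetical data: 20 calls inside the window, 5 older calls outside it.
calls = (
    [{"date": date(2024, 6, 20), "themes": ["onboarding wizard"]}] * 3
    + [{"date": date(2024, 6, 15), "themes": ["invoice layout"]}] * 1
    + [{"date": date(2024, 6, 10), "themes": []}] * 16
    + [{"date": date(2024, 4, 1), "themes": ["invoice layout"]}] * 5
)

queue = themes_for_triage(calls, today=date(2024, 6, 30))
print(queue)
```

Here "onboarding wizard" appears in 3 of the 20 recent calls (15%) and enters the queue, while "invoice layout" appears in only 1 of 20 recent calls and stays out, because its older mentions fall outside the window.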

Closed-loop tracking. When a product fix ships, the team should monitor whether the corresponding pain point theme decreases in subsequent call data. This validates that the fix worked and creates an accountability mechanism that connects product decisions to customer outcomes.
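A minimal version of that check compares how often a theme appears in the window before a fix shipped with the window after. As in the earlier sketches, the call record shape, the function names, and the data are hypothetical.

```python
from datetime import date, timedelta

def theme_rate(calls, theme, start, end):
    """Share of calls dated in [start, end) that mention the theme,
    or None if there are no calls in that window."""
    window = [c for c in calls if start <= c["date"] < end]
    if not window:
        return None
    return sum(theme in c["themes"] for c in window) / len(window)

def rate_before_and_after(calls, theme, ship_date, window_days=30):
    """Theme rate in the window before the fix shipped and in the window after."""
    span = timedelta(days=window_days)
    before = theme_rate(calls, theme, ship_date - span, ship_date)
    after = theme_rate(calls, theme, ship_date, ship_date + span)
    return before, after

ship_date = date(2024, 6, 1)
calls = (
    # Before the fix: 4 of 10 calls mention the theme.
    [{"date": date(2024, 5, 20), "themes": ["onboarding wizard"]}] * 4
    + [{"date": date(2024, 5, 20), "themes": []}] * 6
    # After the fix: 1 of 10 calls mentions it.
    + [{"date": date(2024, 6, 10), "themes": ["onboarding wizard"]}] * 1
    + [{"date": date(2024, 6, 10), "themes": []}] * 9
)

before, after = rate_before_and_after(calls, "onboarding wizard", ship_date)
print(f"before: {before:.0%}, after: {after:.0%}")
```

A falling rate is consistent with the fix working, but it is not proof on its own: shifts in call mix or seasonality can move the number too, so the comparison is best read alongside the underlying calls.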

If your product team is making roadmap decisions based on ticket categories and quarterly summaries rather than real-time call pattern data, book a demo with Insight7 to see how automated pain point detection across 100% of calls changes what your product team knows and how fast they know it.

Analyze & Evaluate Calls. At Scale.

Frequently Asked Questions

1. How does AI identify pain points from customer calls?

AI transcribes calls, then uses natural language processing to cluster similar customer statements into recurring themes across the full call population. Themes are ranked by frequency and sentiment intensity, surfacing the most common and most emotionally charged product issues without requiring manual review.

2. Is AI pain point detection more accurate than manual analysis?

It is more consistent and more complete. AI analyzes every call, eliminating the sampling bias inherent in manual review. It does not replace human judgment on severity or strategic relevance, but it ensures the patterns that reach decision-makers are statistically representative rather than anecdotally selected.

3. What types of pain points can AI detect from calls?

AI detects product usability issues, feature gaps, pricing confusion, onboarding friction, documentation failures, and service quality problems. It also identifies themes that do not fit existing ticket categories, which is often where the most actionable insights hide.

4. How quickly can AI surface new pain point trends?

Patterns typically surface within days of a trend emerging, compared to weeks or months with manual reporting cycles. This is particularly valuable after product releases or pricing changes, when new issues appear rapidly and early detection keeps churn from compounding.

5. What size team benefits from AI-driven pain point analysis?

Teams with 40+ customer-facing reps generating hundreds or thousands of calls monthly see the clearest ROI. Below that threshold, call volume may not produce enough data density for statistical pattern detection. Above it, manual analysis becomes functionally impossible at the granularity product teams need.
