A sales operations lead spends six hours every Monday building a pipeline report for the Thursday leadership meeting. The report summarizes 400 calls from the previous week, highlights deal risks, surfaces objection patterns, and flags reps who need coaching attention. By Thursday, the data is already four days stale. By the time leadership acts on it, the patterns have shifted.

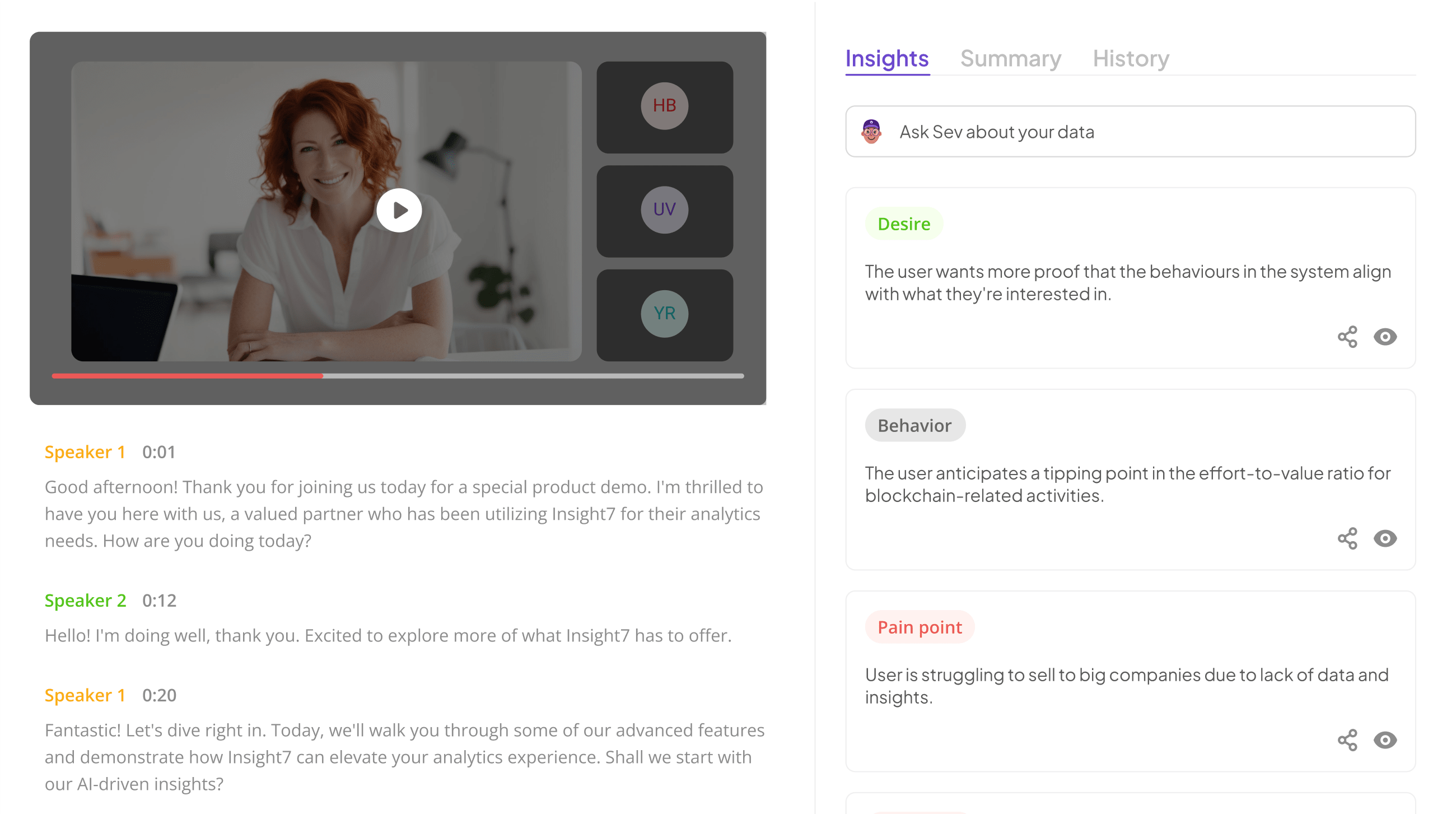

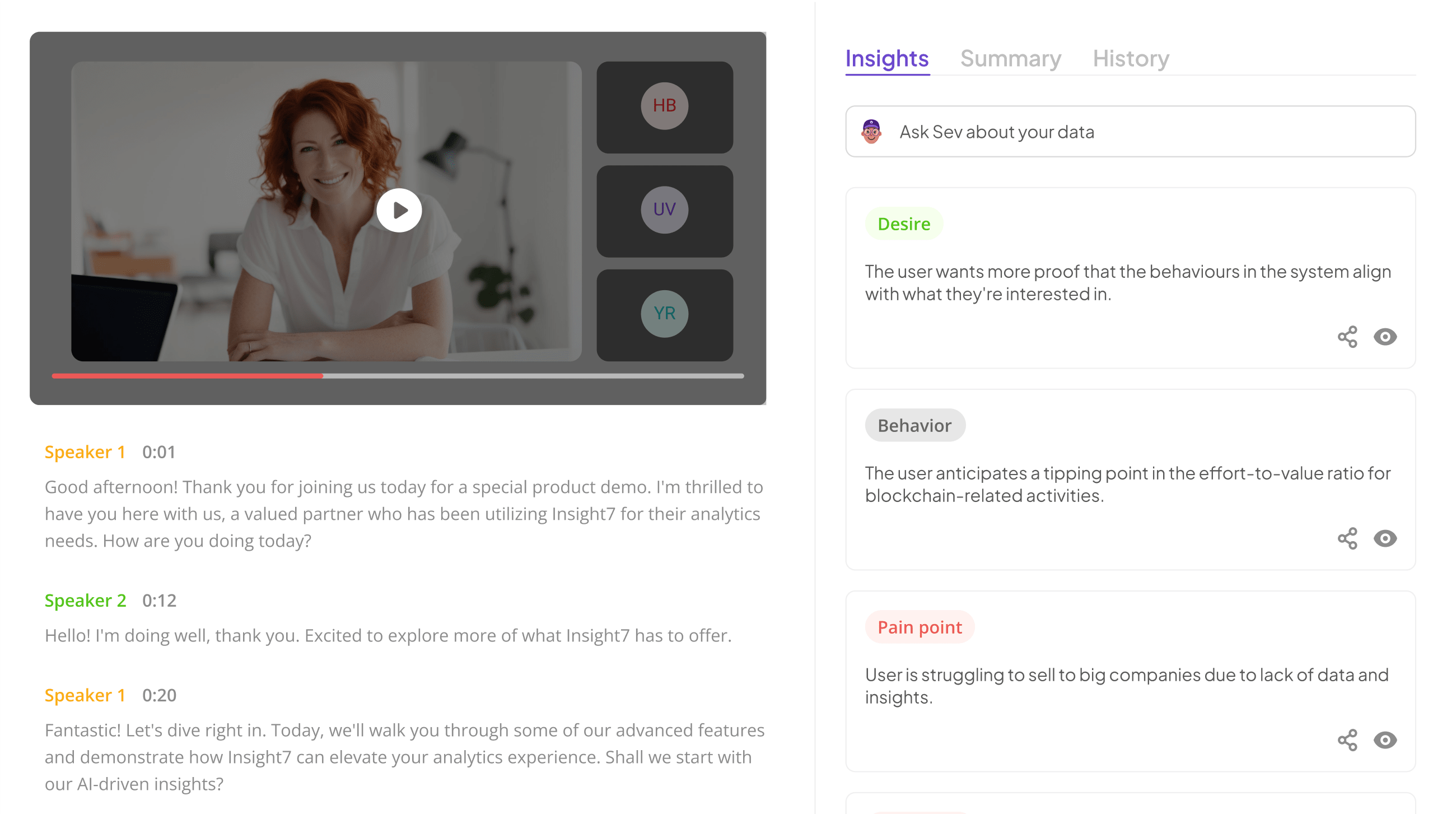

This is where it actually makes sense to use AI to write reports. Not for the abstract task of drafting documents, but for the specific operational problem of converting high-volume conversation data into structured reports fast enough to be actionable. Insight7’s call analytics platform generates automated QA scorecards, pipeline reports, and conversation trend analyses from 100% of calls, producing the same outputs a sales ops lead builds manually, but in hours rather than days. For mid-market sales and contact center teams with 40+ reps, the question is not whether to use AI to write reports. It is which reports to automate first, and where human judgment still matters.

Here is a practical guide to AI-generated reporting for sales, QA, and customer support teams, with the tools that actually produce usable output and the places where automation creates more problems than it solves.

Why Generic AI Report Writing Tools Fail for Call Data

Most guides on how to use AI to write reports recommend ChatGPT or Microsoft Copilot. These tools work well for drafting prose from structured inputs. They do not work well for the reporting problem that most sales and contact center teams actually face.

The problem with generic AI writing tools for call data: they need the data to be structured before the reporting happens. ChatGPT can summarize a meeting transcript if you paste it in. It cannot ingest 400 call recordings, score them against a custom QA rubric, cluster themes across the population, and generate a report with evidence-linked examples. That requires purpose-built call analytics that combine transcription, scoring, theme extraction, and reporting in one workflow.

The second problem: generic tools produce generic output. A ChatGPT-generated sales report reads like a ChatGPT-generated sales report. It summarizes what you fed it without the operational context that makes a report useful, such as which deals are at risk, which reps deviate from top performer patterns, or which objections are trending up this week.

The third problem: no audit trail. When a pipeline report influences a deal review or a compliance decision, the report needs to link back to the specific call evidence that produced each insight. Generic AI tools do not preserve that lineage.

Which Reports Make Sense to Automate with AI

Not every report benefits from automation. The reports where AI delivers real value share three characteristics: they are generated on a repeating cadence, they pull from a large population of source data, and the analytical patterns are consistent enough to codify.

QA scorecards per rep. Scoring 100% of calls against behavioral criteria produces rep-level scorecards that show criterion-specific performance over time. Manual QA reviewers can score 5% of calls. AI scores everything, which means the scorecard reflects the rep’s actual performance pattern rather than a sample. Insight7’s QA engine generates these automatically with evidence links to the specific call moments that produced each score.
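To make the shape of a rep-level scorecard concrete, here is a minimal Python sketch. It is not Insight7's implementation. The `CriterionScore` record, the 0.0 to 1.0 scale, the criterion names, and the sample data are all assumptions made for illustration. It shows each criterion average staying attached to the call moments that produced it.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class CriterionScore:
    """One scored criterion on one call (hypothetical record shape)."""
    rep: str
    call_id: str
    criterion: str
    score: float       # assumed scale: 0.0 (missed) to 1.0 (fully met)
    evidence_ts: str   # timestamp of the call moment behind the score


def build_scorecards(scores):
    """Average each criterion per rep and keep the evidence references."""
    grouped = defaultdict(list)
    for s in scores:
        grouped[(s.rep, s.criterion)].append(s)

    cards = defaultdict(dict)
    for (rep, criterion), items in grouped.items():
        cards[rep][criterion] = {
            "average": round(mean(s.score for s in items), 2),
            "calls_scored": len(items),
            "evidence": [(s.call_id, s.evidence_ts) for s in items],
        }
    return dict(cards)


# Illustrative data only; real scores would come from the scoring engine.
SAMPLE_SCORES = [
    CriterionScore("ana", "call-001", "discovery_questions", 1.0, "02:14"),
    CriterionScore("ana", "call-002", "discovery_questions", 0.5, "01:40"),
    CriterionScore("ana", "call-001", "next_step_confirmed", 0.0, "18:05"),
    CriterionScore("ben", "call-003", "discovery_questions", 0.5, "03:31"),
]
SCORECARDS = build_scorecards(SAMPLE_SCORES)
```

Because every average carries its list of `(call_id, timestamp)` pairs, a manager reviewing a low score can jump straight to the moments behind it.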

Objection and theme tracking reports. When a sales leader needs to know which objections are trending up, manual review of 40 calls out of 400 provides a sample too small to detect meaningful shifts. AI theme extraction across the full call population produces frequency counts drawn from every call rather than a 10% sample, so a week-over-week change reflects an actual shift rather than sampling noise. Pattern changes show up within days rather than quarters.
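The sampling point can be checked with a rough calculation. The sketch below assumes the 40 reviewed calls are a simple random sample of the 400, that the objection truly appears in 20% of calls (an illustrative figure, not one from any dataset), and that a normal approximation with a finite population correction is adequate. The function name `margin_of_error` is our own.

```python
import math


def margin_of_error(p, sample_size, population, z=1.96):
    """Approximate 95% margin of error for a proportion.

    Uses the normal approximation with a finite population correction.
    Returns 0.0 when every call in the population is reviewed.
    """
    if sample_size >= population:
        return 0.0
    standard_error = math.sqrt(p * (1 - p) / sample_size)
    correction = math.sqrt((population - sample_size) / (population - 1))
    return z * standard_error * correction


# Assumed true objection rate of 20%, for illustration only.
SAMPLED = margin_of_error(0.20, sample_size=40, population=400)
FULL = margin_of_error(0.20, sample_size=400, population=400)
```

Under these assumptions the 40-call sample carries a margin of roughly 12 percentage points in either direction, so a move from 20% to 25% cannot be told apart from noise. Full coverage removes the sampling error, although transcription and theme-classification errors still apply.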

Compliance monitoring reports. In financial services and healthcare, required disclosures must be delivered on every call. Automated scoring flags missed or incomplete disclosures across 100% of calls and classifies them by severity tier. Manual compliance review at 3% coverage catches a fraction of violations and creates regulatory exposure.
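As a rough illustration of flagging by severity tier, the sketch below checks each transcript for required phrases. The disclosure names, phrases, and tiers are hypothetical, and exact phrase matching is a deliberately naive stand-in for the scoring a real platform would perform.

```python
from collections import defaultdict

# Hypothetical rules: disclosure name -> (required phrase, severity tier).
REQUIRED_DISCLOSURES = {
    "recording_notice": ("this call may be recorded", "high"),
    "fee_disclosure": ("fees may apply", "medium"),
}


def find_missed_disclosures(call_id, transcript):
    """Return one flag per required phrase absent from the transcript."""
    text = transcript.lower()
    return [
        {"call_id": call_id, "disclosure": name, "severity": severity}
        for name, (phrase, severity) in REQUIRED_DISCLOSURES.items()
        if phrase not in text
    ]


def compliance_report(transcripts):
    """Check every call and group the resulting flags by severity tier."""
    by_severity = defaultdict(list)
    for call_id, transcript in transcripts.items():
        for flag in find_missed_disclosures(call_id, transcript):
            by_severity[flag["severity"]].append(flag)
    return dict(by_severity)


# Illustrative transcripts only.
TRANSCRIPTS = {
    "call-101": "Hi, this call may be recorded. Note that fees may apply.",
    "call-102": "Hi, this call may be recorded. Let's get started.",
    "call-103": "Hello! Fees may apply to this account.",
}
REPORT = compliance_report(TRANSCRIPTS)
```

The point of the structure is coverage: every call passes through the same check, and each flag names the call and the missed disclosure so a reviewer can verify it.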

Coaching effectiveness reports. L&D teams need to know whether a training program changed behavior on calls. Pre- and post-training scores on the specific behavioral criteria the training targeted, pulled automatically from call data, answer that question directly. Without automation, the L&D team is guessing based on surveys.
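The before-and-after comparison is simple arithmetic once scores exist for every call. A minimal sketch, assuming scores on a 0.0 to 1.0 scale and a hypothetical criterion name:

```python
from statistics import mean


def coaching_effect(pre_scores, post_scores):
    """Compare average criterion scores before and after training.

    Both arguments map a criterion name to a list of per-call scores.
    Only criteria present in both periods are compared.
    """
    effect = {}
    for criterion in pre_scores.keys() & post_scores.keys():
        pre_avg = mean(pre_scores[criterion])
        post_avg = mean(post_scores[criterion])
        effect[criterion] = {
            "pre": pre_avg,
            "post": post_avg,
            "change": post_avg - pre_avg,
        }
    return effect


# Illustrative scores only, for one targeted criterion.
PRE = {"objection_handling": [0.5, 0.25, 0.75]}
POST = {"objection_handling": [0.75, 1.0, 0.5]}
EFFECT = coaching_effect(PRE, POST)
```

A positive `change` on the targeted criterion is a signal, not proof. Deal mix and seasonality can move scores too, so the owner of the training program still interprets the result.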

Conversation trend reports for product and marketing. Product managers want to know what customers are actually asking about this quarter. Automated theme extraction across all customer calls delivers frequency data and representative quotes without requiring a dedicated analyst to listen to recordings.

Which Reports Still Need Human Judgment

AI generates the data. Humans still make several judgments that automation cannot.

Severity and strategic relevance. AI can tell you that 22% of calls mention a specific feature gap. It cannot tell you whether that feature is a strategic priority, an edge case for a segment you are intentionally not serving, or a misinterpretation of an existing feature. Product leaders evaluate the AI-surfaced patterns against the company’s strategy.

Deal-specific judgment calls. Pipeline reports can flag deals as at-risk based on conversation signals. Whether to intervene, at what level, and with what message requires the deal owner’s context about the account, the buyer’s personal circumstances, and the competitive landscape.

Cross-functional root cause analysis. AI can surface that customers are confused by a specific workflow. Determining whether the confusion stems from UX design, documentation, sales expectations, or genuine product limitations requires cross-functional investigation. AI produces the signal that triggers the investigation.

How to Structure an AI-Generated Report That Leadership Trusts

Reports generated by AI need three elements to earn executive trust: structured findings tied to evidence, a clear distinction between observation and recommendation, and a consistent format that enables comparison across periods.

Structured findings with evidence links. Every claim in the report should link back to the source data that supports it. “Objection frequency on pricing increased 34% week-over-week” should be clickable to the specific calls that produced the number. Without that lineage, executives treat AI reports as black boxes and discount their authority.
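One way to keep that lineage is to store the supporting call IDs alongside the number itself. The sketch below is an assumption about how such a record could look, not a description of any vendor's data model. The `Finding` class, the call ID format, and the counts are made up to reproduce a 34% increase.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """A reported metric plus the calls that produced it (hypothetical)."""
    metric: str
    previous_call_ids: list
    current_call_ids: list

    @property
    def pct_change(self):
        """Week-over-week change in percent, or None with no baseline."""
        previous = len(self.previous_call_ids)
        if previous == 0:
            return None
        current = len(self.current_call_ids)
        return round((current - previous) / previous * 100, 1)

    def statement(self):
        """Render the claim with a count of its evidence calls."""
        change = self.pct_change
        if change is None:
            return f"{self.metric}: no baseline from the previous week"
        return (
            f"{self.metric} changed {change:+.1f}% week-over-week "
            f"({len(self.current_call_ids)} supporting calls)"
        )


# Illustrative IDs: 50 pricing objections last week, 67 this week.
PRICING = Finding(
    metric="Pricing objection frequency",
    previous_call_ids=[f"w1-{i}" for i in range(50)],
    current_call_ids=[f"w2-{i}" for i in range(67)],
)
```

Because the percentage is computed from the ID lists rather than stored separately, the claim and its evidence cannot drift apart.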

Separate observation from recommendation. AI can reliably surface what is happening. It is less reliable at determining what to do about it. Reports should cleanly separate “here is what the data shows” from “here is what we recommend,” and the recommendations should come from the human owner of the report, not the AI. This keeps accountability clear.

Consistent format across periods. Reports that change structure week-over-week force readers to re-orient every time. Lock the format, measure the same metrics the same way, and let the content shift based on what the data shows.
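These three elements can be enforced with a fixed report structure. The following sketch is illustrative only. The class names, fields, and validation rules are assumptions. It keeps observations and recommendations in separate sections, requires evidence on every observation, and requires a named human owner on every recommendation.

```python
from dataclasses import dataclass, field


@dataclass
class Observation:
    """What the data shows, generated from call analysis."""
    text: str
    evidence_call_ids: list


@dataclass
class Recommendation:
    """What to do about it, written by an accountable person."""
    text: str
    owner: str


@dataclass
class WeeklyReport:
    """Same two sections every week; only the content changes."""
    week: str
    observations: list = field(default_factory=list)
    recommendations: list = field(default_factory=list)

    def validate(self):
        """Return a list of problems; an empty list means publishable."""
        problems = []
        for obs in self.observations:
            if not obs.evidence_call_ids:
                problems.append(f"observation without evidence: {obs.text}")
        for rec in self.recommendations:
            if not rec.owner.strip():
                problems.append(f"recommendation without owner: {rec.text}")
        return problems


# Illustrative report with one unowned recommendation.
REPORT = WeeklyReport(
    week="2024-W20",
    observations=[Observation("Pricing objections rose", ["call-17", "call-42"])],
    recommendations=[Recommendation("Revisit discount policy", owner="")],
)
PROBLEMS = REPORT.validate()
```

Running `validate()` before a report goes out catches the two failure modes described above: claims with no lineage and recommendations with no accountable owner.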

AI Tools That Actually Work for Call-Based Reporting

The right tool depends on what you are reporting on and where the source data lives.

1. Insight7

Insight7 is built for teams that need to generate QA scorecards, objection trackers, close rate drivers, and compliance reports from call data. It handles transcription, scoring, theme extraction, and reporting in one workflow. Built for mid-market sales and contact center teams with 40+ reps. The trade-off: Insight7 is a specialized tool for conversation intelligence reporting, not a general-purpose document writer.

2. Microsoft Copilot

Microsoft Copilot works well for teams that already have structured data in Excel or Word and want to accelerate drafting. It cannot ingest raw call audio or generate scored reports from call data. Useful as a complement to a specialized call analytics tool, not a replacement.

3. ChatGPT

ChatGPT is flexible for ad-hoc report drafting when you already have structured inputs. It requires clear prompts, careful fact-checking, and human review of every output. Not suitable for reports that need audit trails or evidence links to source data.

For sales ops leads, QA managers, and L&D leaders generating reports from call data on a repeating cadence, the specialized path usually wins. Generic tools require too much manual preprocessing to be efficient at scale.

If your team spends hours each week manually building reports from call data that a specialized tool could generate automatically, book a demo with Insight7 to see how automated scoring and reporting across 100% of calls changes what your operating cadence looks like.

Frequently Asked Questions

1. What types of reports can AI write best?

AI performs best on reports generated on a repeating cadence from large datasets with consistent analytical patterns. QA scorecards, compliance monitoring, objection tracking, and conversation trend reports are strong fits. One-off strategic analyses and reports requiring heavy cross-functional judgment are weaker fits.

2. Is ChatGPT good for writing business reports?

ChatGPT works for drafting prose from structured inputs you provide. It is not suitable for generating reports from raw data sources like call recordings, and it does not preserve evidence links to source material. Use it as a drafting assistant, not as a reporting engine.

3. How do I use AI to write reports from call data?

Use a call analytics platform that combines transcription, scoring, and reporting in one workflow. The AI processes the calls, scores them against defined criteria, extracts themes, and generates reports automatically. Generic writing tools cannot handle the transcription and scoring layer that call reporting requires.

4. How long does it take AI to generate a sales report?

Specialized call analytics platforms generate standard reports in minutes rather than hours. Complex custom reports with extensive configuration may take longer on first setup, but subsequent reports run automatically on a defined cadence without manual effort.

5. Can AI reports replace manual reporting entirely?

AI replaces the manual work of data collection, scoring, and draft generation. Human judgment remains essential for severity assessment, strategic interpretation, and recommendations. The most effective operating model uses AI to produce the observations and humans to determine the response.
