An L&D manager at a 120-rep sales organization just wrapped a six-week objection handling training program. She needs to write a summary report for the VP of Sales and CFO. They control next year’s training budget. Her report will land in a 45-minute quarterly review alongside seven other reports competing for the same attention and the same budget line.

The difference between a training summary report that earns continued investment and one that gets skimmed and forgotten is not length, polish, or storytelling. It is whether the report connects training activity to measurable behavioral change on calls and measurable business outcomes.

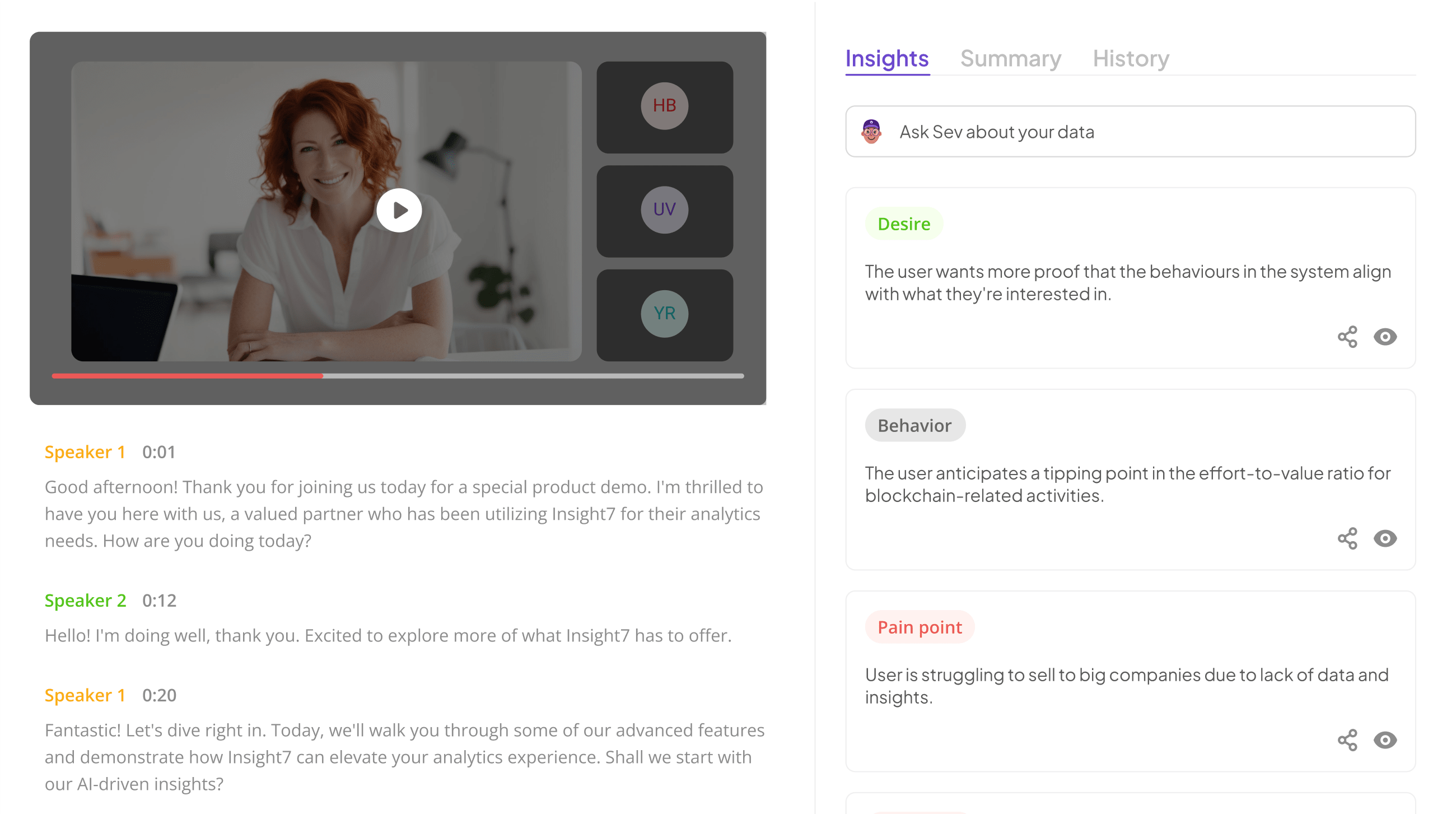

Insight7’s coaching analytics generates this connection automatically by scoring 100% of calls against the specific behaviors each training program targets, producing pre- and post-training criterion scores, cohort pass rates, and trajectory data that a manual report cannot match. For sales and contact center L&D leaders with 40+ reps, the reporting problem is not knowing what to say. It is having evidence strong enough to say it convincingly.

Here is how to structure a training summary report that survives the executive review, and where the evidence actually comes from.

What Executives Actually Want From a Training Summary Report

Before writing a single section, understand the decision the report needs to inform. An executive reading a training summary report is not asking “did training happen?” They are asking three questions in this order:

Did the targeted behaviors change in actual customer-facing work? If objection handling training was delivered, did objection handling scores improve on real calls? If compliance training was delivered, did disclosure pass rates go up? Generic improvement metrics like “participant satisfaction: 4.6 out of 5” do not answer this question.

Did the behavior change produce a business outcome? Improved objection handling scores only matter if they correlate with higher close rates, shorter sales cycles, or reduced discount depth. Improved empathy scores only matter if they correlate with higher CSAT, lower escalation rates, or better retention.

Should we continue, expand, or cut this program? Every training summary report implicitly ends with a budget recommendation. Making that recommendation explicit and backing it with criterion-level data is what separates reports that get approved from reports that get deferred.

The Structure That Works for Sales and Contact Center Training Reports

Most training summary report templates treat every program the same. That breaks down quickly for sales and contact center L&D because the evidence sources are different and the stakes are different. The structure below is built for programs where the output is behavioral change in customer-facing conversations.

Section 1: Program context in three sentences. What was the training, who attended, and what behaviors were targeted? Keep it to that length. Executives know their own organization. They do not need four paragraphs of context.

Section 2: Behavioral change evidence. Pre-training scores on the target behaviors. Post-training scores on the same behaviors. Express the delta as both percentage points and relative improvement. This is the most important section of the report. If the scores did not move, the program did not work, and the rest of the report is cleanup.
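Because percentage points and relative improvement are easy to confuse, a short worked calculation can help. The sketch below is illustrative only: the function name `score_delta` is hypothetical, and the inputs are the 51% and 68% cohort averages from the sample evidence later in this article.

```python
def score_delta(pre_pct: float, post_pct: float) -> dict:
    """Return the change between two scores on a 0-100 scale.

    percentage_points: absolute difference (post minus pre).
    relative_pct: the same difference as a share of the pre-training score.
    """
    if pre_pct <= 0:
        raise ValueError("pre_pct must be positive to compute relative improvement")
    points = post_pct - pre_pct
    relative = points / pre_pct * 100
    return {
        "percentage_points": round(points, 1),
        "relative_pct": round(relative, 1),
    }


# Cohort averages taken from this article's sample evidence section.
delta = score_delta(51, 68)
print(delta)  # 17 percentage points, roughly a 33.3% relative improvement
```

Reporting both figures avoids a common misreading: a move from 51% to 68% is 17 percentage points, not a 17% improvement.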

Section 3: Business outcome correlation. Did the behavior changes correlate with meaningful business metrics such as close rates, CSAT, handle time, escalation rates, and compliance pass rates? Be honest about what the data shows and what it does not. Spurious correlations presented as causation destroy credibility.
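One way to check whether behavior change and outcomes move together is a Pearson correlation across reps. The sketch below is a minimal illustration, not a description of how any particular platform computes it: the function `pearson_r` is written out by hand, and the per-rep figures are hypothetical sample data, not results from a real program.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one sequence is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical per-rep changes (post minus pre), in percentage points.
score_change = [5, 12, 20, 25, 30]    # objection handling score
close_rate_change = [0, 2, 3, 6, 7]   # close rate

r = pearson_r(score_change, close_rate_change)
print(f"r = {r:.2f}")
```

A high coefficient on a small cohort shows that the two measures moved together. It does not show that training caused the outcome, so the report should describe it as a correlation and name other factors that changed in the same window.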

Section 4: Cohort distribution. Aggregate scores hide the range. Report the distribution: how many reps hit the proficiency threshold, how many improved but not to proficiency, how many did not improve. Executives care about this because it reveals whether the program works for everyone or only for the reps who were already close.
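The three buckets described above can be produced from per-rep pre and post scores. This is a rough sketch under stated assumptions: the 70% threshold matches the sample evidence later in this article, while the function name, bucket labels, and rep scores are hypothetical.

```python
from collections import Counter

PROFICIENCY_THRESHOLD = 70  # assumption: percent score that counts as proficient


def cohort_distribution(scores, threshold=PROFICIENCY_THRESHOLD):
    """Bucket reps by post-training result.

    scores maps a rep identifier to a (pre_score, post_score) tuple.
    """
    counts = Counter(
        {"proficient": 0, "improved_below_threshold": 0, "no_improvement": 0}
    )
    for pre, post in scores.values():
        if post >= threshold:
            counts["proficient"] += 1
        elif post > pre:
            counts["improved_below_threshold"] += 1
        else:
            counts["no_improvement"] += 1
    return dict(counts)


# Hypothetical scores for four reps: (pre, post).
sample = {
    "rep_a": (45, 74),
    "rep_b": (38, 55),
    "rep_c": (62, 60),
    "rep_d": (72, 81),
}
distribution = cohort_distribution(sample)
print(distribution)
```

Note that this sketch counts reps who were already above the threshold before training as proficient. A report may want to separate them out, since they say little about whether the program worked.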

Section 5: Recommendation with rationale. Continue, expand, modify, or cut. The recommendation should flow logically from the evidence in sections 2 through 4. No hedging.

Section 6: Appendix with methodology. How the scores were produced, which calls were included, and what the scoring criteria were. This section is for the skeptical reader who wants to verify the evidence. Most executives will not read it. The ones who do are the ones whose opinion matters most.

Where Most Training Summary Reports Go Wrong

Three failure modes account for almost every training report that fails to land with leadership.

- Activity reporting instead of outcome reporting.

Reports that lead with “42 hours of training delivered, 96% attendance, 4.6 participant satisfaction” are answering the wrong question. Activity metrics validate that training happened. Outcome metrics validate that training worked. Executives only care about the second.

- Generic behavioral metrics.

“Communication skills improved 18%” does not mean anything. Communication skills are not a measurable behavior. “Average time before first open-ended question decreased from 3:12 to 1:48” is measurable. “Acknowledgment of customer concern before problem-solving increased from 34% to 71% of calls” is measurable. The more specific the behavior, the more credible the report.

- Missing the connection to business outcomes.

A report showing behavior change without business impact raises an uncomfortable question: so what? The link to outcomes does not have to be causal, but it should exist. Insight7’s call analytics automatically correlates behavior scores with outcomes like close rate, CSAT, and escalation rate, eliminating the manual work of pulling disparate data sources together.

What the Evidence Actually Looks Like

A well-structured training summary report for a sales objection handling program might look like this in the behavioral change section:

Pre-training (4 weeks before program start): Average objection handling score across the cohort of 32 reps was 51%. 6 reps scored above the 70% proficiency threshold. 14 reps scored below 40%.

Post-training (4 weeks after program completion): Average objection handling score rose to 68%. 18 reps scored above the 70% proficiency threshold, up from 6. The bottom cohort (below 40%) dropped from 14 reps to 3.

Business outcome correlation: During the same post-training window, the cohort’s close rate on deals where pricing objections surfaced rose from 22% to 29%. Average discount depth on closed deals decreased by 4 percentage points. The 12 reps who newly crossed the proficiency threshold showed the largest close rate improvement.

That is what evidence-backed reporting looks like. It is specific, measurable, tied to real work, and defensible under scrutiny.

Why This Kind of Report Was Previously Impossible at Scale

The reason most L&D teams produce activity reports rather than outcome reports is not laziness. It is that the evidence for outcome reports historically required manually reviewing hundreds of calls to score them against the target behaviors. A 32-rep cohort generating 40 calls per rep per month produces 1,280 calls monthly. Manually scoring even 10% of that to build a pre- and post-training behavioral assessment is a full-time job.

Automated call scoring across 100% of calls removes the manual bottleneck. The L&D team defines the behavioral criteria that map to the training program, the platform scores every call against those criteria automatically, and the training summary report pulls directly from the scoring data. A report that used to take two weeks of manual work now takes an hour to review and approve.

If your L&D team is writing training reports based on survey scores and attendance data because pulling real behavioral evidence is too labor-intensive, book a demo with Insight7 to see how automated scoring changes what your training summary reports are capable of proving.

Frequently Asked Questions

1. What should a training summary report include?

A training summary report should include program context, pre- and post-scores on the specific behaviors the training targeted, correlation with business outcomes, cohort distribution, and a clear recommendation. Activity metrics like attendance and satisfaction are supplementary, not central.

2. How long should a training summary report be?

Three to five pages for the main report, plus a methodology appendix. Executives will not read longer reports unless the first page makes them want to. Focus on behavioral change evidence and business outcome correlation; cut everything else ruthlessly.

3. What metrics should a training summary report track?

Behavioral metrics specific to the training program (not generic “skills improved” claims), business outcome metrics that plausibly connect to the behavior changes, and cohort distribution data showing how results varied across the group. Avoid vanity metrics that do not inform a decision.

4. How do you prove training changed behavior on calls?

Score calls against the specific behaviors the training targeted before and after the program. Automated QA scoring platforms make this feasible across large call populations. Manual scoring works on a smaller scale but limits statistical confidence.
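To make the statistical confidence point concrete, the sketch below estimates the margin of error on a pass rate at two sample sizes. It is a simplification under stated assumptions: it uses the standard normal approximation for a proportion, treats the scored calls as a simple random sample, and assumes a worst-case pass rate of 50%. The call counts come from the 32-rep example earlier in this article; the function name is hypothetical.

```python
from math import sqrt

Z_95 = 1.96  # z-value for a 95% confidence level


def margin_of_error_pp(sample_size: int, pass_rate: float = 0.5) -> float:
    """Approximate 95% margin of error on a pass rate, in percentage points."""
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    if not 0 <= pass_rate <= 1:
        raise ValueError("pass_rate must be between 0 and 1")
    return Z_95 * sqrt(pass_rate * (1 - pass_rate) / sample_size) * 100


manual_sample = margin_of_error_pp(128)   # 10% of 1,280 monthly calls
full_coverage = margin_of_error_pp(1280)  # every call in the month

print(f"128 calls:   +/- {manual_sample:.1f} points")
print(f"1,280 calls: +/- {full_coverage:.1f} points")
```

Under these assumptions, a 10% manual sample carries an uncertainty of roughly 9 points on the cohort pass rate, against roughly 3 points when every call is scored. The gap widens further for per-rep scores, where a manual sample may cover only a handful of calls.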

5. How often should training summary reports be delivered?

At the conclusion of each program, plus quarterly rollups for leadership review. Programs running longer than a quarter should include mid-program checkpoints, particularly if the program is new or represents a significant budget.
