You run a 60-rep contact center. Your first call resolution (FCR) hovers around 68%, customer satisfaction (CSAT) sits at 76%, and your QA team manually reviews maybe 3% of calls per month. Your CEO just asked how those numbers compare to the rest of your industry, and you are not sure whether to feel confident or concerned.

That is the exact scenario where call center KPI benchmarks by industry stop being an academic exercise and start driving real decisions.

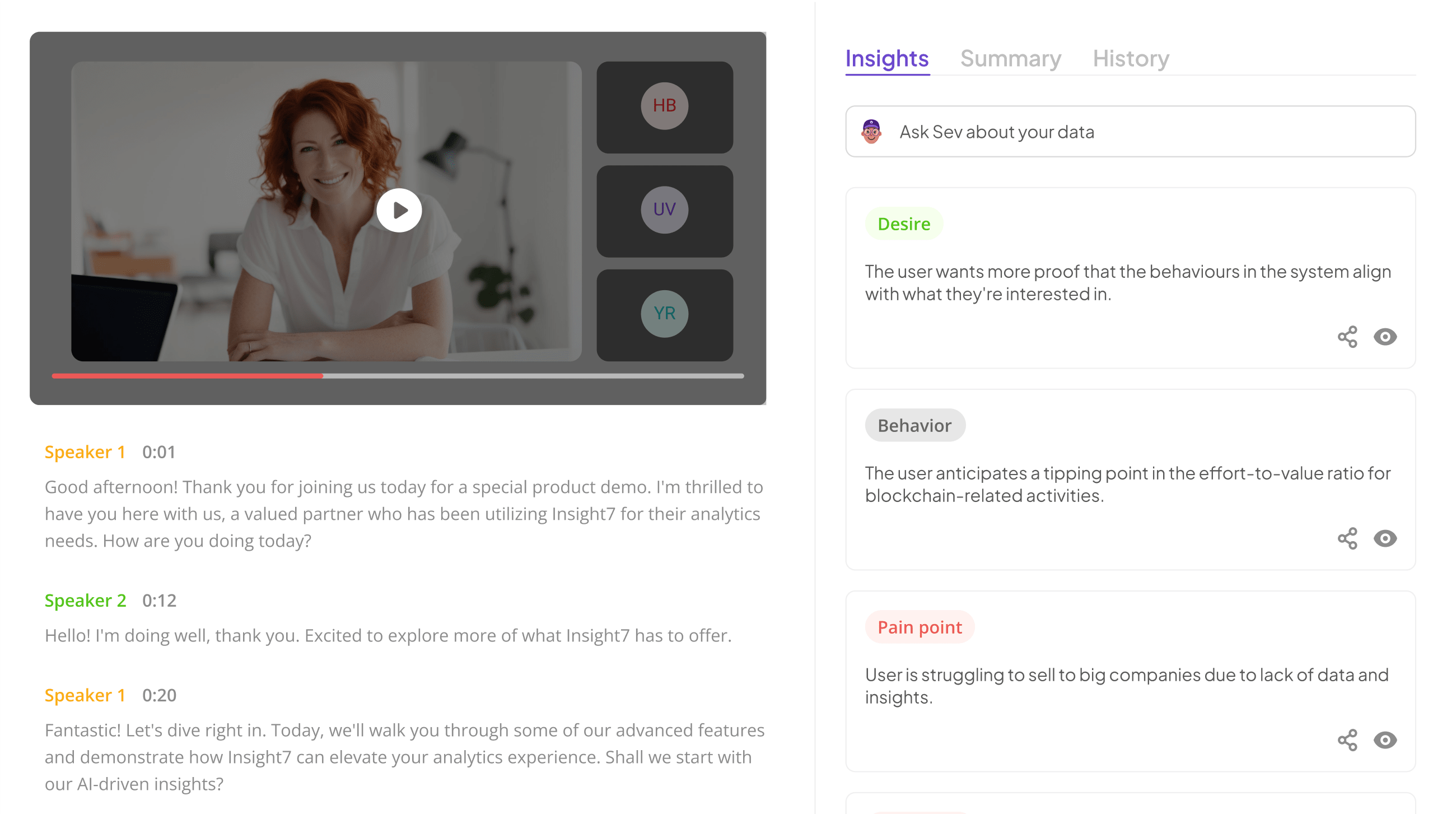

Insight7’s automated call analytics and QA platform scores 100% of calls against custom QA frameworks, giving mid-market contact centers the data density to benchmark accurately rather than guess from a 3% sample.

Without that coverage, most benchmarks are built on incomplete data, which means the targets you set and the coaching you deliver are based on a fragment of what is actually happening on your calls.

Here is what the numbers actually look like across five major verticals, what they mean for your operation, and where the real gaps tend to hide.

Healthcare: Compliance and Speed Are Non-Negotiable

Healthcare contact centers handle appointment scheduling, billing, insurance verification, and urgent clinical triage. Patients call in high-stress moments, and every mishandled interaction is a compliance risk and a retention problem.

According to the Talkdesk 2025 KPI Benchmarking Report, healthcare contact centers operate under tighter service-level expectations than most verticals. The benchmarks that matter most here:

FCR targets sit between 75% and 85%. Patients who have to call back about the same billing question or appointment change are not just frustrated; they lose trust in the provider. CSAT expectations run 85% to 90% or higher, driven by the emotional weight of healthcare interactions.

Average speed of answer needs to stay under 30 seconds, particularly for lines handling urgent clinical questions. Call abandonment should remain below 4%, because in healthcare, an abandoned call can mean a missed appointment or a delayed care decision.

The operational challenge is that HIPAA compliance, identity verification, and EHR lookups add friction to every interaction. Teams that rely on manual QA sampling miss compliance violations on the vast majority of calls they never review. Automated QA scoring across 100% of healthcare calls catches disclosure gaps, empathy failures, and verification shortcuts that a 2% manual sample will never surface.

Financial Services: Trust Is Built on Accuracy

Financial services contact centers handle account inquiries, fraud alerts, loan processing, and regulatory disclosures. A single compliance miss can trigger regulatory action, and a single trust-breaking interaction can lose a customer worth years of revenue.

The benchmarks reflect that weight. FCR ranges from 75% to 85%, but the real differentiator is the quality of resolution, not just whether the call was technically closed. CSAT targets run 80% to 90%.

Agent QA scores need to hit 90% or above to ensure compliance with disclosure requirements, verification protocols, and regulatory scripts. Average handle time (AHT) runs 6 to 10 minutes, longer than in most verticals, because verification and compliance steps add necessary time.

The gap most financial services teams miss is between their QA score and their actual compliance exposure.

If your QA program reviews 5% of calls and scores 92%, you have no idea what is happening on the other 95%. According to research cited by ICMI, a significant share of contact centers do not monitor voice calls with regularity, and those that do typically evaluate only 1% to 2% of total interactions. For regulated industries, that is not a benchmarking problem. It is a risk management failure.
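To make the sampling risk concrete, here is a minimal sketch of how often a random QA sample would show no compliance misses at all for a single rep. The function name and every figure in it (300 calls a month, 6 calls with a disclosure miss, 15 calls reviewed) are hypothetical assumptions for illustration, not data from any report cited here, and the calculation assumes calls are sampled uniformly at random.

```python
from math import comb


def prob_sample_misses_all(total_calls: int, bad_calls: int, sampled: int) -> float:
    """Probability that a simple random sample of `sampled` calls contains
    none of the `bad_calls` problem calls (hypergeometric, zero hits)."""
    clean_calls = total_calls - bad_calls
    # Ways to draw the whole sample from clean calls / ways to draw any sample.
    return comb(clean_calls, sampled) / comb(total_calls, sampled)


# Hypothetical rep: 300 calls in a month, 6 contain a disclosure miss,
# and QA manually reviews 15 of them (a 5% sample).
p_miss = prob_sample_misses_all(total_calls=300, bad_calls=6, sampled=15)
print(f"Chance the sample shows zero misses: {p_miss:.0%}")
```

Under these assumed numbers the sample comes back clean roughly 73% of the time, even though the rep missed a disclosure on six calls. Reviewing every call removes that blind spot by definition.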

Insight7 scores every call against custom compliance and QA frameworks built for financial services, flagging disclosure misses, verification gaps, and script deviations automatically. That turns your QA score from a sample-based estimate into a census-level measurement.


E-Commerce: Speed and Conversion Are the Scoreboard

E-commerce contact centers handle order inquiries, returns, product questions, and increasingly, pre-purchase sales conversations. Volume spikes around promotions and holidays make staffing and service-level management a constant challenge.

FCR benchmarks land between 70% and 80%. CSAT runs 80% to 88%, with a direct line to repeat purchase rates and review scores.

AHT should stay between 5 and 8 minutes, reflecting the transactional nature of most e-commerce calls. Average response time for chat needs to stay under 2 minutes, and email under 24 hours.

The undertracked metric in e-commerce is conversion rate on inbound sales calls. Many e-commerce teams treat their contact center as a cost center when a meaningful percentage of inbound calls are purchase-intent interactions.

Teams that track and coach on conversion rate alongside CSAT often find that better call handling drives both metrics simultaneously, because a rep who genuinely solves a pre-purchase objection both converts and satisfies.

Sales Contact Centers: Revenue Per Conversation

Sales contact centers, both inbound and outbound, are measured on pipeline contribution. The benchmarks look different because the outcome is revenue, not resolution.

Conversion rates vary widely: 5% to 15% for inbound qualified leads, 1% to 5% for outbound cold calls. The more useful benchmarks are lead-to-opportunity rate and opportunity-to-close rate, which reveal where in the funnel your team loses momentum.
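As a rough illustration of why the stage-level rates are more useful than a single conversion number, the sketch below compares two hypothetical teams. The function name and all counts are invented for the example.

```python
def funnel_rates(leads: int, opportunities: int, closed_won: int) -> dict[str, float]:
    """Break overall conversion into its two funnel stages."""
    return {
        "lead_to_opportunity": opportunities / leads,
        "opportunity_to_close": closed_won / opportunities,
        "overall_conversion": closed_won / leads,
    }


# Two hypothetical teams with the same overall conversion (10%).
team_a = funnel_rates(leads=400, opportunities=100, closed_won=40)
team_b = funnel_rates(leads=400, opportunities=200, closed_won=40)

for name, rates in (("Team A", team_a), ("Team B", team_b)):
    print(name, {stage: f"{rate:.0%}" for stage, rate in rates.items()})
```

Both teams convert 10% of leads, but Team A loses most of them before an opportunity is created while Team B loses them at the close. The coaching focus would be different for each.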

AHT is less relevant here than average call duration by outcome, because a 12-minute call that closes is worth more than a 4-minute call that does not.

The coaching gap in sales contact centers is specific. Most sales QA programs score on generic behaviors (did the rep ask discovery questions, did they handle objections) without tying those behaviors to outcomes. Insight7’s AI coaching workflows connect call-level behavioral data to conversion outcomes, so coaching sessions focus on the specific patterns that separate closers from the rest of the team.

Tech Support: Resolution Quality Over Handle Time

Tech support contact centers handle troubleshooting, product questions, and escalation management. AHT benchmarks run 10 to 15+ minutes because complex technical issues legitimately take longer to resolve.

Forcing AHT down in tech support almost always increases repeat contact rate.

FCR targets land between 70% and 79%. CSAT runs 78% to 85%. The metric that matters most here is repeat contact rate for the same issue, which should stay below 15%. A low repeat rate means issues are genuinely resolved, not just triaged.
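Repeat contact rate can be defined in several ways, so the sketch below picks one as an assumption: a contact counts as a repeat if the same customer reached out about the same issue within the previous 7 days. The record layout, the 7-day window, and the sample data are all hypothetical.

```python
from datetime import date, timedelta


def repeat_contact_rate(contacts, window_days=7):
    """Share of contacts that repeat an earlier contact from the same customer
    about the same issue within `window_days`.

    contacts: iterable of (customer_id, issue_code, contact_date) tuples.
    """
    window = timedelta(days=window_days)
    last_seen = {}  # (customer_id, issue_code) -> date of most recent contact
    repeats = 0
    total = 0
    for customer, issue, day in sorted(contacts, key=lambda c: c[2]):
        key = (customer, issue)
        if key in last_seen and day - last_seen[key] <= window:
            repeats += 1
        last_seen[key] = day
        total += 1
    return repeats / total if total else 0.0


# Hypothetical contact log.
contacts = [
    ("c1", "login", date(2025, 3, 3)),
    ("c1", "login", date(2025, 3, 5)),    # repeat: same issue, 2 days later
    ("c2", "billing", date(2025, 3, 4)),
    ("c3", "sync", date(2025, 3, 4)),
    ("c3", "sync", date(2025, 3, 20)),    # same issue, but outside the window
    ("c1", "billing", date(2025, 3, 6)),  # same customer, different issue
    ("c4", "install", date(2025, 3, 7)),
    ("c5", "login", date(2025, 3, 8)),
]

rate = repeat_contact_rate(contacts)
print(f"Repeat contact rate: {rate:.1%}")
```

In this sample, one of eight contacts is a repeat, which gives 12.5% and sits under the 15% ceiling. Whatever definition you choose, keep the window and the issue matching consistent from one period to the next so the trend stays comparable.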

The coaching opportunity in tech support is knowledge base utilization and escalation accuracy. Teams that track which agents consistently resolve issues that others escalate can identify both training gaps and knowledge base gaps simultaneously.

How to Actually Use These Benchmarks

Knowing the numbers is the easy part. The hard part is turning them into operational changes. Three principles make benchmarks useful rather than decorative:

Benchmark against your own trajectory first. Industry averages are directional. Your four-to-six-quarter trend line is diagnostic. If your FCR improved from 65% to 72% in two quarters, that momentum matters more than whether the industry average is 75%.

Benchmark by segment, not in aggregate. Your FCR on billing calls and your FCR on technical escalations are two completely different numbers driven by different root causes. Blending them into a single metric hides the signal.

Connect benchmarks to coaching. A benchmark that does not change anyone’s behavior is a vanity metric. The path from “our CSAT is 77%” to “our CSAT is 83%” runs through specific call-level coaching on specific behaviors. That requires reviewing more than a handful of calls per rep per month and tying coaching directly to the patterns that appear in the data.
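The second principle, benchmarking by segment, is easy to see with a small calculation. The sketch below is illustrative only: the segment names, call counts, and function name are hypothetical.

```python
from collections import defaultdict


def fcr_by_segment(calls):
    """Compute first call resolution per segment.

    calls: iterable of (segment, resolved_on_first_contact) tuples.
    """
    totals = defaultdict(int)
    resolved = defaultdict(int)
    for segment, was_resolved in calls:
        totals[segment] += 1
        resolved[segment] += int(was_resolved)
    return {segment: resolved[segment] / totals[segment] for segment in totals}


# Hypothetical month: 100 billing calls and 60 technical escalations.
calls = (
    [("billing", True)] * 80
    + [("billing", False)] * 20
    + [("technical", True)] * 30
    + [("technical", False)] * 30
)

by_segment = fcr_by_segment(calls)
blended = sum(was_resolved for _, was_resolved in calls) / len(calls)

print(f"Blended FCR: {blended:.1%}")
for segment, fcr in by_segment.items():
    print(f"{segment}: {fcr:.0%}")
```

With these assumed counts the blended FCR is 68.8%, which hides the fact that billing resolves 80% of calls on first contact while technical escalations resolve only 50%. The single number gives no hint of where the coaching effort should go.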

If your team has outgrown manual QA sampling and you need benchmarks built on 100% of your calls rather than a 2% guess, book a demo with Insight7 to see how automated scoring and coaching workflows close the gap between knowing your numbers and actually moving them.

Frequently Asked Questions

1. What is a good first call resolution rate for contact centers?

A good FCR rate falls between 70% and 79% across most industries. Healthcare and financial services teams typically target 75% to 85% due to the higher stakes of unresolved interactions in those verticals.

2. How many calls should QA teams review each month?

Most contact centers manually review 1% to 5% of calls, which is too small to surface systemic patterns. Automated QA platforms score 100% of calls, giving teams a complete picture rather than a sample-based estimate.

3. Which call center KPIs matter most for healthcare?

FCR, CSAT, average speed of answer, and call abandonment rate are the core four. Compliance adherence (HIPAA disclosures, identity verification) is equally critical but often undertracked because manual QA programs cannot cover enough volume.

4. How do call center KPI benchmarks differ between sales and support?

Support benchmarks prioritize resolution (FCR, repeat contact rate, CSAT). Sales benchmarks prioritize conversion (lead-to-opportunity rate, close rate, revenue per call). AHT targets are also different: support teams optimize for efficiency while sales teams optimize for outcome.

5. Should I benchmark against industry averages or my own historical data?

Both, but weigh your own trajectory more heavily. Industry averages provide directional context, but your quarter-over-quarter trend reveals whether your operational changes are working. A team improving from 65% to 75% FCR is in a stronger position than a team flat at 76%.
