A training report that actually gets read does three things: it summarizes what was measured, shows where training worked and where it did not, and ends with a specific recommendation. Most training reports do only the first of these. This guide covers how to write a training session feedback report that earns attention from decision-makers and drives changes in your next program.

Why Most Training Reports Don't Drive Action

The gap between a training report and an action plan is usually caused by one of two problems: the report describes activity (who attended, what was covered) rather than outcomes (what changed, what didn't), or the recommendations are too vague to execute ("consider additional training" is not a recommendation).

According to a Brandon Hall Group report on learning measurement, fewer than 30% of L&D teams routinely measure behavior change after training, even though behavior change is the level of evaluation that predicts whether training produced business outcomes. Most programs stop at satisfaction scores.

A well-structured training session report bridges that gap by organizing feedback data around outcomes, not activities.

How do you write a report on a training program?

A training program report follows five sections: an executive summary (2-3 sentences covering what was trained, who attended, and the primary finding), an attendance and completion summary, a feedback analysis section with scores and specific comments organized by theme, a performance data section comparing pre- and post-training metrics where available, and a recommendations section with at least one specific action tied to the data. Each section should be written for a different reader: the executive summary for a VP, the feedback analysis for the training team, the performance data for HR.

How to Write a Training Session Feedback Report

Step 1: Collect the right inputs before you write

A training report requires three inputs: attendance data (who attended, role, department, completion status), participant feedback scores (satisfaction, relevance, trainer effectiveness, likelihood to apply), and post-training performance data if available. Without all three, you can describe the event but cannot evaluate it.

Post-training performance data is the hardest to get but the most valuable. For contact center and sales training, Insight7 captures pre- and post-training call scores automatically, so the report can show whether QA criteria scores improved in the weeks after training rather than relying only on participant self-assessment.
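As a rough sketch, the three inputs from this step can be held in one record per participant, which makes it easy to check what is missing before you start writing. The field names, the 1-5 scale, and the `missing_inputs` helper are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ParticipantRecord:
    # Attendance data
    name: str
    role: str
    department: str
    completed: bool
    # Feedback scores, assumed 1-5 (None if the survey was not returned)
    satisfaction: Optional[int] = None
    relevance: Optional[int] = None
    trainer_effectiveness: Optional[int] = None
    likelihood_to_apply: Optional[int] = None
    # Performance data (None if not collected)
    pre_score: Optional[float] = None
    post_score: Optional[float] = None


def missing_inputs(records: List[ParticipantRecord]) -> List[str]:
    """Return which of the three input types are absent for the whole cohort."""
    if not records:
        return ["attendance", "feedback", "performance"]
    missing = []
    if all(r.satisfaction is None for r in records):
        missing.append("feedback")
    if all(r.pre_score is None or r.post_score is None for r in records):
        missing.append("performance")
    return missing
```

If `missing_inputs` returns anything, the report can still describe the event, but the evaluation sections will be thin.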

Step 2: Put the executive summary first

The executive summary is the most-read section of any training report. Draft it last, once the findings are settled, but place it first in the report. It should answer: what did the training cover, who completed it, and what is the primary finding? Keep it to 2-3 sentences.

Example: "Sales onboarding training delivered to 12 new reps in Q1 2026. Completion rate was 100%. Post-training call scores on objection handling improved by an average of 14 points in the six weeks following training, though two reps remain below the coaching threshold and have been assigned follow-up sessions."

Step 3: Organize feedback by theme, not by question

Most training reports present feedback question by question ("average score for trainer effectiveness: 4.2/5"). This format is accurate but not useful. Instead, organize feedback into themes: what participants found most useful, what they found least applicable to their role, and what they requested in future sessions.

Insight7's thematic analysis capability can process written feedback responses and extract cross-participant themes with frequency counts. Rather than reading every comment field individually, the training coordinator sees a summary such as "8 of 12 participants mentioned scenario realism as a strength; 6 mentioned the pace was too fast for complex topics."
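To make the idea of theme frequency counts concrete, here is a minimal sketch that tags comments by keyword and counts how many participants touched each theme. It is a simple substring match, not how Insight7 or any other platform works internally, and the `THEMES` keyword lists are hypothetical.

```python
from collections import Counter

# Hypothetical theme keywords; a real analysis would need a richer taxonomy.
THEMES = {
    "scenario realism": ["realistic", "scenario", "role-play"],
    "pace too fast": ["too fast", "rushed", "pace"],
}


def count_themes(comments, themes=THEMES):
    """Count how many comments mention each theme at least once."""
    counts = Counter()
    for comment in comments:
        text = comment.lower()
        for theme, keywords in themes.items():
            # Substring matching is crude: "pace" would also match "space".
            if any(keyword in text for keyword in keywords):
                counts[theme] += 1
    return counts


def theme_lines(comments, themes=THEMES):
    """Format the counts as 'N of M participants mentioned ...' lines."""
    total = len(comments)
    counts = count_themes(comments, themes)
    return [
        f"{n} of {total} participants mentioned {theme}"
        for theme, n in counts.most_common()
    ]
```

This assumes one comment per participant. Keyword matching will miss paraphrases and produce some false positives, so treat the counts as a starting point for reading the comments rather than a replacement for it.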

Step 4: Include a performance data section

If your training program connects to measurable performance metrics, include a before-and-after comparison. This is the section that convinces decision-makers that training was worth the investment.

For contact center and sales teams, relevant metrics include QA scores on specific criteria covered in training, handle time changes, customer satisfaction on calls immediately after training completion, and first-call resolution rates. Present these as a simple table with the metric, pre-training baseline, and post-training result.
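The table described above can be produced with a few lines of code. This sketch assumes each metric arrives as a (name, pre, post) tuple and adds a change column; the sample figures are made up for illustration only.

```python
def comparison_table(metrics):
    """Build a plain-text table from (name, pre, post) tuples."""
    header = f"{'Metric':<28}{'Pre':>8}{'Post':>8}{'Change':>8}"
    rows = [header, "-" * len(header)]
    for name, pre, post in metrics:
        rows.append(f"{name:<28}{pre:>8.1f}{post:>8.1f}{post - pre:>+8.1f}")
    return "\n".join(rows)


# Made-up numbers for illustration only.
sample_metrics = [
    ("QA: objection handling", 58.0, 72.0),
    ("Avg handle time (min)", 7.5, 6.8),
]
print(comparison_table(sample_metrics))
```

Note that the sign of the change does not indicate direction of improvement on its own: a lower handle time is usually better, while a higher QA score is better, so label each metric accordingly in the report.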

Step 5: Write actionable recommendations

Each recommendation should name a specific problem, cite the data that revealed it, and propose a specific action. For example: "Two of twelve participants scored below 60 on post-training call evaluations for objection handling. Recommend assigning targeted role-play sessions on objection reframing before their next call quota period."
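The problem, data, action pattern can be drafted directly from post-training scores. The sketch below is one possible approach: the function name, the default threshold of 60, and the default action text are assumptions for illustration, and the output is a first draft to edit, not a finished recommendation.

```python
def draft_recommendation(post_scores, criterion, threshold=60,
                         action="targeted role-play sessions"):
    """Draft one recommendation from a {participant: post_score} mapping.

    Returns None when nobody scored below the threshold.
    """
    below = sorted(name for name, score in post_scores.items() if score < threshold)
    if not below:
        return None
    return (
        f"{len(below)} of {len(post_scores)} participants scored below {threshold} "
        f"on post-training call evaluations for {criterion} ({', '.join(below)}). "
        f"Recommend assigning {action}."
    )
```

Calling `draft_recommendation({"Rep A": 55, "Rep B": 72, "Rep C": 58}, "objection handling")` yields a sentence that names the gap, the number of people affected, and the follow-up, which you can then refine with timing and ownership.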

According to ATD research on learning evaluation, recommendations that are specific and backed by data are significantly more likely to be implemented than general conclusions.

How do you write a summary of a training program?

A training program summary covers four elements: scope (what was trained, to whom, over what period), delivery (format, trainer, completion rate), feedback results (key scores and participant themes, not every question), and outcomes (performance data or behavioral observations linked to training objectives). Keep the summary to one page. Full question-by-question data belongs in the appendix.

Using AI to Generate Training Reports at Scale

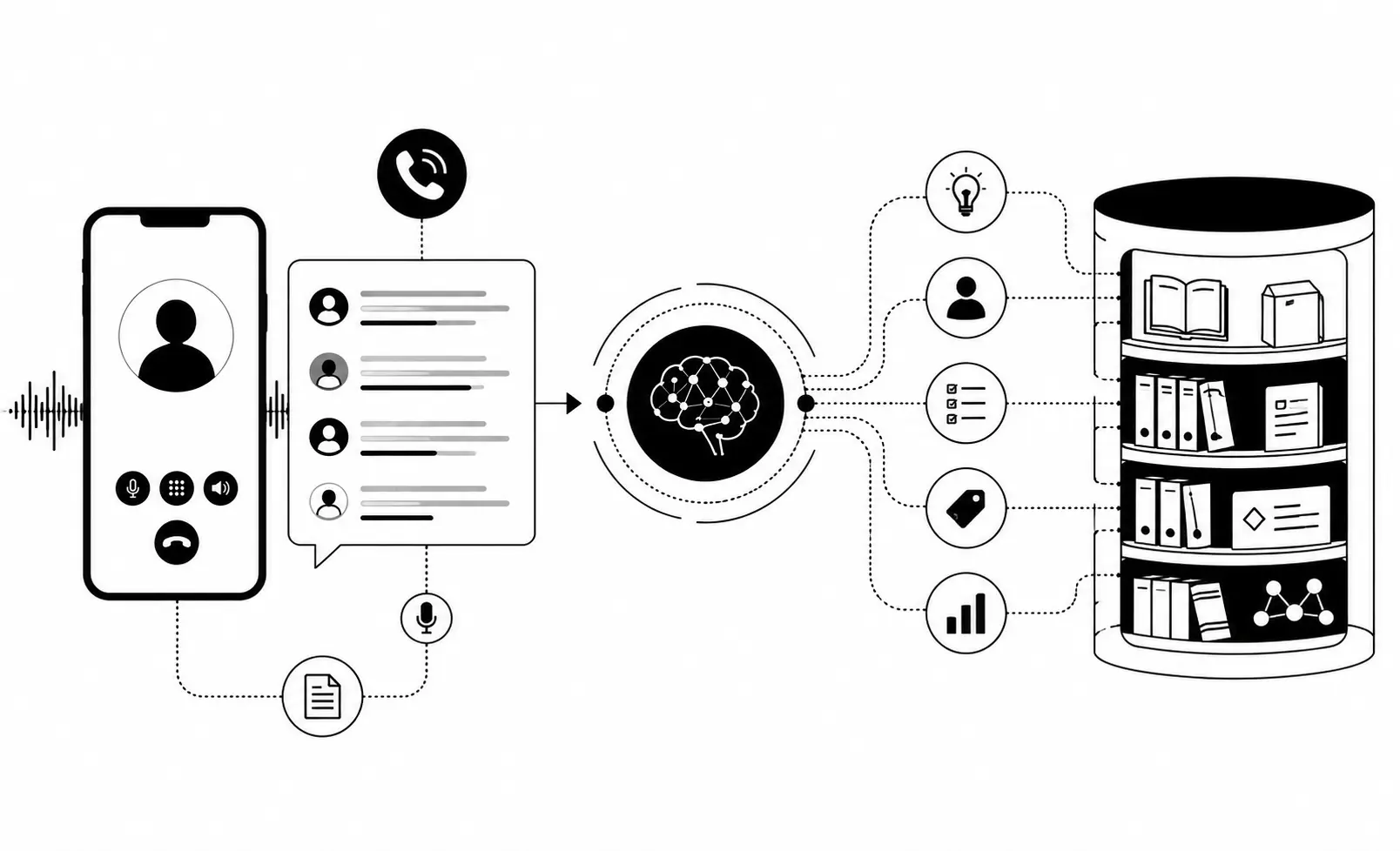

Manual report writing from spreadsheet exports is time-consuming and inconsistent across programs. AI platforms can analyze feedback at scale, extract themes from open-ended responses, and compare performance data across cohorts.

Insight7 processes post-training call recordings alongside feedback data, surfacing which training topics transferred to actual call behavior and which did not. The result is a report that shows behavior change, not just completion.

Fresh Prints implemented this workflow to connect QA outcomes directly to training program results: when QA scores changed after a training intervention, the data surfaced automatically rather than requiring a manual analysis run.

If/Then Decision Framework

If your training reports are read but don't drive changes, then the problem is in the recommendations section. Write recommendations that name a specific person, metric, and action rather than general conclusions.

If you only have satisfaction scores and no performance data, then add at least one post-training metric to your next program: QA score changes, assessment results, or 30-day behavior observation data.

If you are reporting across multiple training programs and cohorts, then standardize on a consistent format and let AI thematic analysis handle the feedback summarization so reports are comparable.

If decision-makers read the executive summary only, then write the primary finding into the first sentence, not the last paragraph.

FAQ

How do you write a report on a training program?

Structure the report in five sections: executive summary (2-3 sentences, primary finding first), attendance and completion data, feedback analysis organized by theme rather than by question, performance data comparing pre- and post-training metrics, and specific, actionable recommendations with data backing each one. Write the executive summary last but place it first.

How do you write feedback on a training program?

Training feedback reports should separate participant sentiment (did they like the training?) from training effectiveness (did behavior change?). Present satisfaction scores with the themes from open-ended comments, then follow with any performance data showing whether the skills applied. Decision-makers need both, but they are not the same data.

See how Insight7's training analytics platform captures performance data before and after training programs so your reports show behavior change, not just completion rates.