Your QA team scores 40 calls a week. The scores go into a spreadsheet. The coaching conversation, when it happens, sounds like “you need to work on empathy” or “good job this week.” Nothing changes. Scores stay flat. Reps tune out the feedback because it is too vague to act on and too disconnected from the specific moments on the call that mattered.

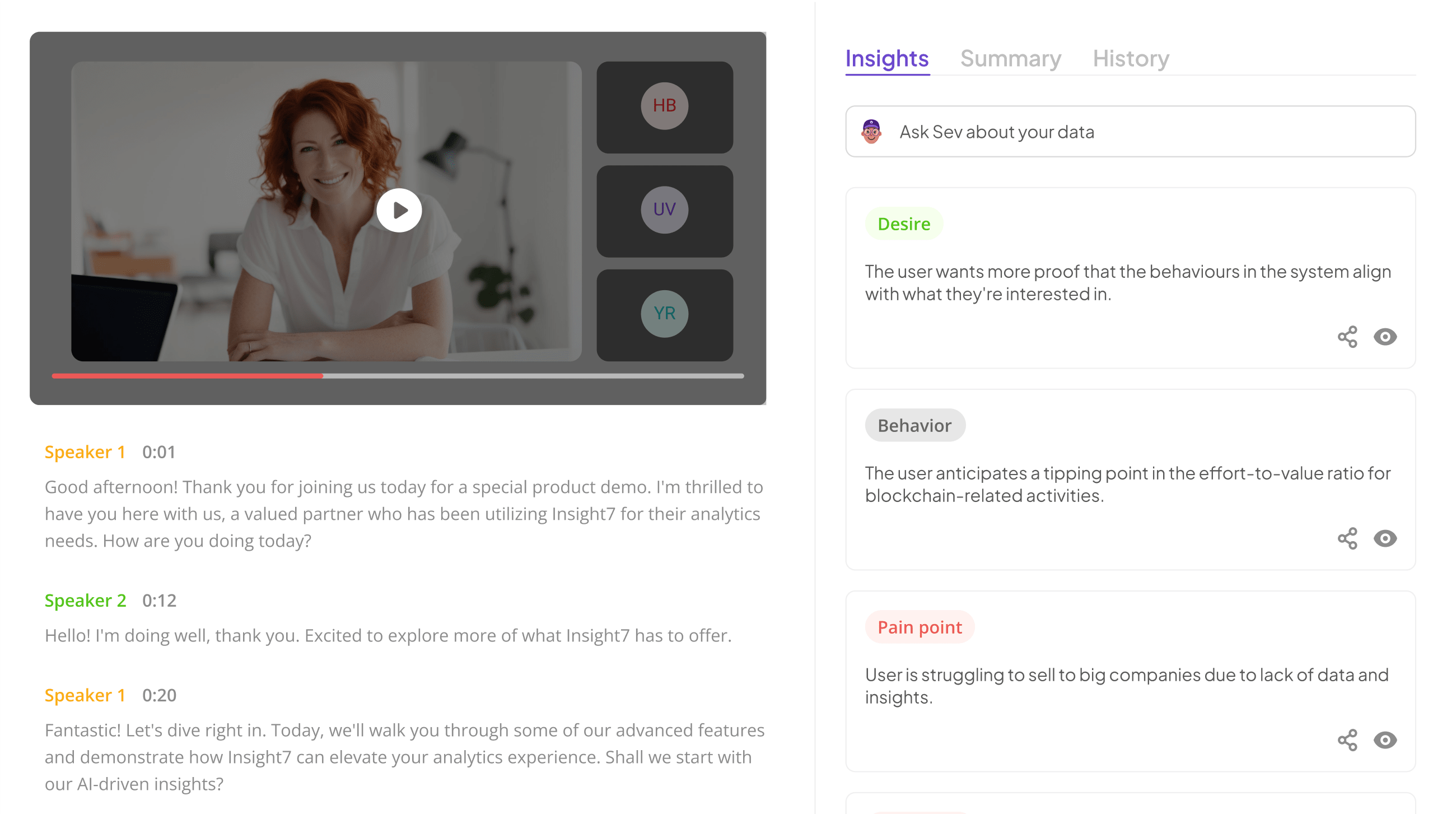

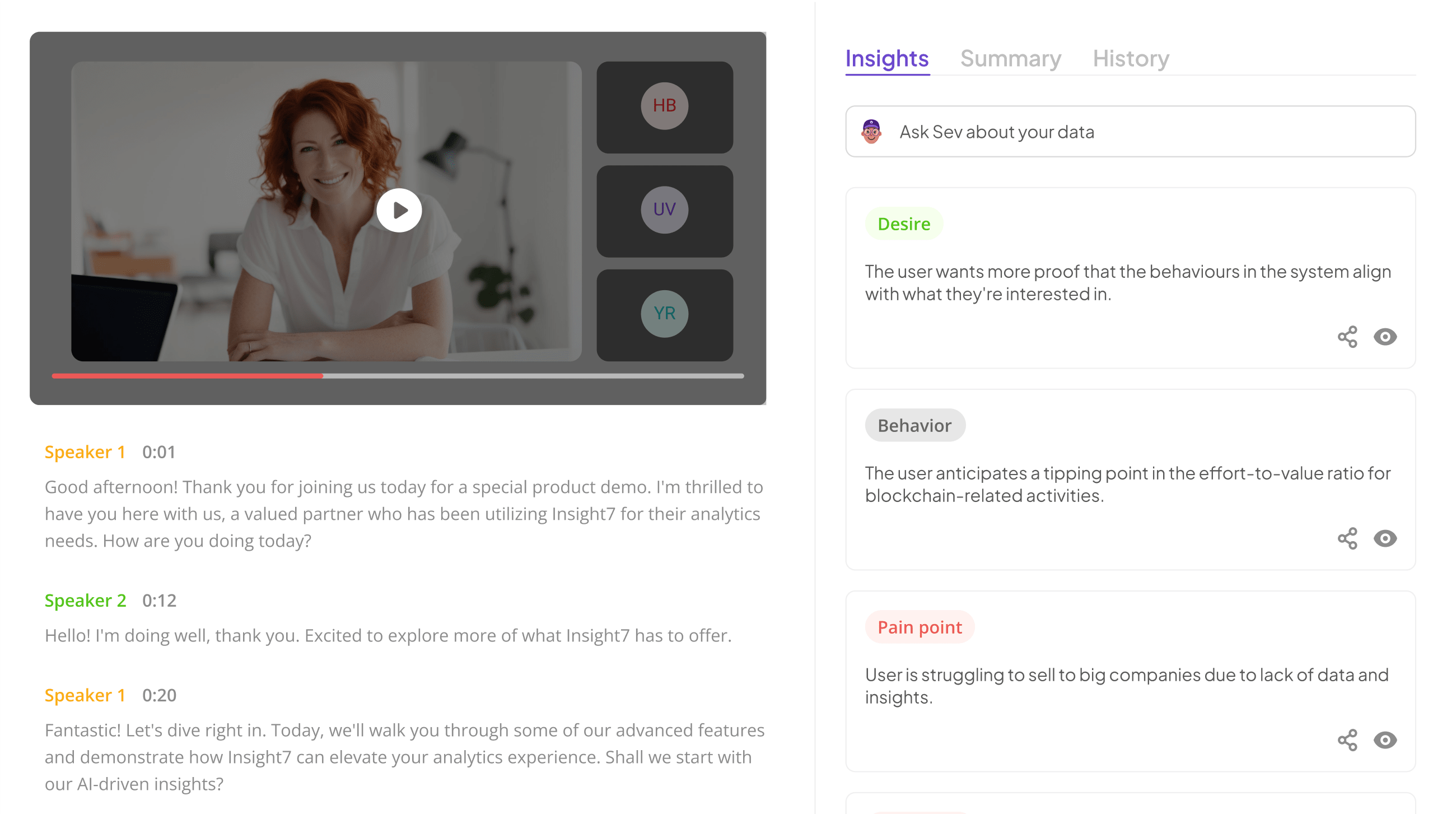

The problem is not that QA feedback exists. The problem is that most QA feedback in call centers is written at the wrong altitude. It describes categories (“needs improvement on closing”) instead of specific behaviors (“on the Johnson call at 4:12, the customer asked about cancellation and you moved to the retention script before acknowledging their frustration”). Insight7’s automated QA platform scores 100% of calls against custom behavioral criteria and links every score to the exact call moment that produced it. That gives QA managers evidence-based feedback that reps can hear, understand, and act on in their next call.

Here are concrete quality assurance feedback examples across five categories, written the way effective QA managers actually deliver them.

Positive Feedback That Reinforces Specific Behaviors

Generic praise (“great call!”) feels good but teaches nothing. Effective positive QA feedback names the behavior, names the moment, and connects it to the outcome.

Example 1: Empathy acknowledgment before problem-solving

“On your 10:15 call with the Meridian account, the customer opened with frustration about a billing error. Before jumping to the fix, you said, ‘I understand how frustrating that must be, especially when you are managing a tight budget cycle.’ That acknowledgment shifted the customer’s tone immediately. Your resolution time on that call was 4:20, which is below your average, because the customer cooperated once they felt heard. Keep leading with acknowledgment before resolution on escalated calls.”

Example 2: Proactive next-step commitment

“On three of your five demo calls this week, you closed by confirming the exact next step, the date, and who owns it. On the Thornton call specifically, you said, ‘I will send the proposal by Thursday with the compliance module included, and we will reconvene Friday at 2.’ That level of specificity is why your pipeline velocity is 22% faster than the team average. The two calls where you did not do this both stalled at follow-up.”

These examples work because the rep can connect the praise to a repeatable action. That is what makes positive QA feedback a coaching tool rather than a morale gesture.

Constructive Feedback That Targets a Behavior, Not a Person

Constructive feedback fails when it sounds like a character judgment (“you are not empathetic enough”) rather than a behavior observation (“on this specific call, you skipped the acknowledgment step”). The structure that works: name the moment, describe what happened, describe what the alternative looks like, and explain the impact.

Example 3: Missed de-escalation opportunity

“On your 2:30 call Tuesday, the customer raised their voice at 1:45 when you quoted the renewal price. Your response was to repeat the price and move to the next agenda item. The customer interrupted you twice after that. An alternative approach: when a customer’s tone shifts to frustration, pause and acknowledge what you just heard before continuing. Something like ‘I hear that the price increase is a concern. Let me walk through what changed and why.’ That gives the customer space to feel heard before you present the justification.”

Example 4: Rushing through compliance language

“On your last five calls, your average disclosure completion time was 8 seconds. The required disclosure has 42 words. At that speed, roughly 315 words per minute, most customers cannot process what you are saying, which creates both a compliance risk and a trust issue. Slow the disclosure to a conversational pace, roughly 15 to 18 seconds. It does add time, but it reduces callbacks from customers who later say they did not understand the terms.”

The key is specificity. “Work on your compliance delivery” gives the rep nothing. “Your disclosure speed is 8 seconds and needs to be 15 to 18 seconds” gives them a measurable target.
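
The arithmetic behind that target is easy to check. The sketch below converts the figures from Example 4 (42 words, 8 seconds, a 15 to 18 second target) into words per minute. The function name is made up for illustration and is not part of any QA platform.

```python
def words_per_minute(word_count: int, seconds: float) -> float:
    """Speaking rate implied by delivering `word_count` words in `seconds`."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return word_count * 60 / seconds


DISCLOSURE_WORDS = 42  # length of the required disclosure in Example 4

current_pace = words_per_minute(DISCLOSURE_WORDS, 8)   # 315.0 wpm
target_fast = words_per_minute(DISCLOSURE_WORDS, 15)   # 168.0 wpm
target_slow = words_per_minute(DISCLOSURE_WORDS, 18)   # 140.0 wpm

print(f"Current: {current_pace:.0f} wpm, target: {target_slow:.0f} to {target_fast:.0f} wpm")
```

Putting the number in front of the rep this way turns “slow down” into a gap they can see: the current pace is roughly double the target.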

Compliance-Specific QA Feedback for Regulated Industries

In financial services and healthcare, compliance feedback carries a different weight. A missed disclosure is not a coaching opportunity. It is a regulatory exposure. QA feedback in regulated environments should clearly separate compliance failures (binary: it happened or it did not) from quality improvements (spectrum: could be better).

Example 5: Missing required disclosure

“On the Patterson call, the rate lock disclosure was not delivered. This is a compliance failure, not a quality issue. The disclosure must be delivered on every call where the rate is discussed, regardless of whether the customer asks about it. I have flagged this for compliance review. Going forward, the disclosure trigger is any mention of rate, APR, or monthly payment by either party.”
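
The trigger rule in that feedback can be written down as a simple check. This is a minimal sketch, assuming the call is available as a list of transcript turns and that plain keyword matching is good enough for a first pass. The names `TRIGGER_PATTERN`, `disclosure_required`, and `compliance_result` are hypothetical, and a real system would need to handle false positives such as “rate your experience.”

```python
import re

# Assumed trigger terms, taken from the rule in Example 5:
# any mention of rate, APR, or monthly payment by either party.
TRIGGER_PATTERN = re.compile(r"\b(rates?|apr|monthly payments?)\b", re.IGNORECASE)


def disclosure_required(turns: list[str]) -> bool:
    """True if any turn, from either party, mentions a trigger term."""
    return any(TRIGGER_PATTERN.search(turn) for turn in turns)


def compliance_result(turns: list[str], disclosure_delivered: bool) -> str:
    """Binary outcome: the disclosure either happened when required or it did not."""
    if not disclosure_required(turns):
        return "not_applicable"
    return "pass" if disclosure_delivered else "fail"


call = [
    "Thanks for calling, how can I help?",
    "What would my monthly payment be on that loan?",
]
print(compliance_result(call, disclosure_delivered=False))  # fail
```

The result is deliberately a pass/fail value rather than a score, which mirrors the separation between compliance failures and quality improvements.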

Example 6: Disclosure delivered but buried

“On the Chen call, you delivered the disclosure at 6:42, after spending four minutes on product benefits. By that point the customer was ready to close and treated the disclosure as an afterthought. Move the disclosure earlier in the conversation, ideally within the first two minutes after the product discussion begins. Delivering it when the customer is still actively engaged improves both comprehension and compliance quality.”

Insight7’s QA scoring for financial services flags both missed disclosures and disclosure timing automatically, classifying them by severity tier so QA managers can focus review on the violations that carry actual regulatory risk rather than triaging every flagged call manually.

Coaching Session Feedback That Connects QA Scores to Development

QA scores are inputs. Coaching sessions are where they become performance changes. The feedback delivered in a coaching session should connect the score to a specific development action.

Example 7: Connecting a pattern to a practice assignment

“Your empathy scores have been below 60% for three consecutive weeks. Looking at the flagged calls, the pattern is consistent: you move to resolution before the customer finishes describing their issue. This week, I am assigning you two roleplay scenarios in Insight7’s skills practice module focused on active listening in escalated situations. Complete them before Friday, and we will review your call scores next Monday to see if the pattern shifts.”

This structure works because it ties four things together: a specific score pattern, a specific behavior observation, a specific practice action, and a specific follow-up date. The rep knows what the problem is, what they need to do, and when it will be evaluated.
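
The score pattern in Example 7 (“below 60% for three consecutive weeks”) is the kind of rule that can be checked mechanically before the coaching session. The sketch below assumes weekly scores arrive as a list of percentages, oldest first. The function name and default values are illustrative only.

```python
def below_threshold_streak(
    weekly_scores: list[float], threshold: float = 60.0, weeks: int = 3
) -> bool:
    """True if each of the most recent `weeks` scores is below `threshold`."""
    recent = weekly_scores[-weeks:]
    return len(recent) == weeks and all(score < threshold for score in recent)


empathy_scores = [72.0, 58.0, 55.0, 54.0]  # hypothetical data, oldest week first
if below_threshold_streak(empathy_scores):
    print("Pattern confirmed: assign practice and schedule a follow-up review.")
```

A check like this only identifies the pattern. The behavior observation and the practice assignment still come from the QA manager reviewing the flagged calls.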

Why Most QA Feedback Fails and How to Fix It

QA feedback fails for three predictable reasons. First, it is based on too small a sample. When a QA manager reviews 5 of a rep’s 120 monthly calls (about 4%), the feedback reflects those 5 calls, not the rep’s actual performance pattern. Scoring 100% of calls means feedback references patterns, not anecdotes.
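
To see why a 5-call sample can miss a real pattern, consider a rough illustration. It assumes the 5 reviewed calls are chosen at random from the 120, and that a problem behavior shows up in 12 of them. The figure of 12 is hypothetical and not from any dataset.

```python
from math import comb


def chance_sample_misses(total_calls: int, affected_calls: int, reviewed: int) -> float:
    """Probability that a random sample of `reviewed` calls contains none
    of the `affected_calls` (sampling without replacement)."""
    return comb(total_calls - affected_calls, reviewed) / comb(total_calls, reviewed)


coverage = 5 / 120  # share of calls reviewed, about 4%
miss = chance_sample_misses(total_calls=120, affected_calls=12, reviewed=5)

print(f"Coverage: {coverage:.1%}")
print(f"Chance the sample contains no affected call: {miss:.0%}")
```

Under these assumptions, the reviewer is more likely than not to see no example of a behavior that occurs on one call in ten.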

Second, feedback is delivered too late. A QA review that happens three weeks after the call has lost its connection to the rep’s memory of the interaction. Automated scoring that produces results within hours allows coaching conversations to happen while the call is still fresh.

Third, feedback lacks evidence. “You need to work on empathy” is an opinion. “Your empathy scores averaged 54% this week, driven by three calls where you skipped acknowledgment before moving to resolution” is evidence. Evidence-based feedback removes the defensiveness that blocks behavior change.

If your QA team is delivering feedback based on a handful of manually reviewed calls and your scores are not improving, book a demo with Insight7 to see how automated scoring across every call produces the evidence-linked, behavior-specific feedback that actually moves performance.

Frequently Asked Questions

1. What are good quality assurance feedback examples for call centers?

Good QA feedback names a specific call moment, describes the behavior observed, explains the impact, and provides a clear alternative when improvement is needed. Generic comments like “good job” or “needs improvement” do not qualify because reps cannot connect them to a repeatable action.

2. How often should QA feedback be delivered to call center agents?

Weekly is the minimum effective cadence for formal feedback. Automated QA scoring enables near-daily informal feedback because scores and evidence are available within hours of each call. The faster the feedback loop, the faster behavior changes.

3. How do you give constructive QA feedback without demotivating agents?

Focus on the behavior, not the person. Reference the specific call moment, describe what happened, describe the alternative, and explain the impact. Pairing constructive feedback with specific positive examples from the same period shows the rep that you see the full picture, not just the gaps.

4. What is the difference between compliance feedback and quality feedback?

Compliance feedback addresses binary pass/fail criteria, such as required disclosures that either happened or did not. Quality feedback addresses a spectrum of performance, such as empathy, pacing, or objection handling. Mixing the two in the same conversation creates confusion about severity. Keep them separate.

5. How many calls should QA review per agent per month?

Most contact centers manually review 3 to 5 calls per agent per month, which is too small to surface patterns. Automated QA platforms score every call, providing a complete behavioral profile rather than a snapshot. This allows feedback to reference trends rather than individual outliers.
