Using AI for Strategic Decision Support in High-Risk Call Centers

Bella Williams

AI-driven decision support is changing how high-risk call centers operate by helping professionals make informed choices quickly. Instead of manually sifting through lengthy call recordings, supervisors can pull up performance metrics and customer-interaction insights directly. This makes it easier to assess how effective training is and to monitor compliance, so teams can respond more effectively to customer needs.

AI tools give call centers data analytics capabilities that would be impractical to apply by hand at scale. By analyzing call trends and frequently asked questions, AI surfaces insights that can directly inform training programs and improve the overall service experience. With this support, organizations can shift from reactive problem-solving to proactive strategy refinement, which can lead to higher customer satisfaction and better operational efficiency.

The Role of AI-Driven Decision Support Systems in Modern Call Centers

AI-driven decision support systems make call-center operations more efficient by automating the analysis of customer interactions and handing managers the results. For instance, they can assess call quality against predefined metrics and assign performance scores to customer service representatives (CSRs). This speeds up evaluation and improves training by identifying the specific areas where individual CSRs need further development.
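
To make "assessing call quality against predefined metrics" concrete, here is a minimal sketch in Python. It assumes the call has already been transcribed and that each metric can be checked by looking for required phrases in the agent's speech. The rubric, its phrases and weights, and the `Criterion` and `score_call` names are hypothetical illustrations, not any vendor's scoring method; production systems typically rely on trained language models rather than phrase matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One predefined quality metric (phrase matching is a deliberate simplification)."""
    name: str
    weight: float
    phrases: tuple[str, ...]  # any one of these phrases satisfies the criterion


# Hypothetical rubric, for illustration only.
RUBRIC = (
    Criterion("greeting", 1.0, ("thank you for calling",)),
    Criterion("identity_verification", 2.0, ("verify your identity", "date of birth")),
    Criterion("recording_disclosure", 2.0, ("this call may be recorded",)),
    Criterion("closing", 1.0, ("anything else i can help",)),
)


def score_call(agent_text: str, rubric: tuple[Criterion, ...] = RUBRIC) -> tuple[float, list[str]]:
    """Return a 0-100 weighted score and the names of the criteria the agent missed."""
    text = agent_text.lower()
    total = sum(c.weight for c in rubric)
    earned = 0.0
    missed = []
    for c in rubric:
        if any(p in text for p in c.phrases):
            earned += c.weight
        else:
            missed.append(c.name)
    return round(100 * earned / total, 1), missed


print(score_call(
    "Thank you for calling. Before we continue, I need to verify your identity. "
    "Is there anything else I can help with?"
))
# (66.7, ['recording_disclosure'])
```

The list of missed criteria is what turns a single score into a training signal: it points a supervisor at the exact behavior a CSR skipped.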

AI-driven decision support can also analyze trends in customer inquiries so teams can adapt their training programs accordingly. Knowing which questions come up most often lets call centers align instructional content so CSRs are better prepared to answer them. Together, these capabilities improve both operational performance and customer satisfaction, making call centers more responsive to stakeholder needs and better prepared to handle challenges.
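
As a rough illustration of finding the most frequently asked questions, the sketch below tags each customer question with topics and counts them across calls using Python's `collections.Counter`. The keyword map and the `tag_topics` and `top_topics` functions are hypothetical; a real deployment would more likely use a trained intent classifier in place of keyword matching.

```python
from collections import Counter

# Hypothetical topic keywords, for illustration only.
TOPIC_KEYWORDS = {
    "billing": ("bill", "charge", "refund"),
    "account_access": ("password", "locked out", "login"),
    "fraud": ("unauthorized", "fraud", "stolen"),
}


def tag_topics(question: str) -> set[str]:
    """Return every topic whose keywords appear in the customer's question."""
    q = question.lower()
    return {topic for topic, words in TOPIC_KEYWORDS.items() if any(w in q for w in words)}


def top_topics(questions: list[str], n: int = 3) -> list[tuple[str, int]]:
    """Count topics across many calls and return the n most frequent."""
    counts = Counter()
    for q in questions:
        counts.update(tag_topics(q))  # a question can count toward several topics
    return counts.most_common(n)


questions = [
    "Why is there an extra charge on my bill?",
    "I'm locked out after a password reset",
    "There is an unauthorized charge on my card",
    "Can I get a refund?",
]
print(top_topics(questions))
# [('billing', 3), ('account_access', 1), ('fraud', 1)]
```

Ranking topics this way gives training teams a simple, auditable basis for deciding which scripts and modules to update first.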

Enhancing Risk Management with AI-Driven Decision Support

AI-driven decision support strengthens risk management in high-risk call centers. Using data analytics and machine learning, organizations can identify potential threats and mitigate them before they cause harm. AI systems can analyze call patterns, assess agent performance, and detect anomalies, helping managers make informed decisions quickly.
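
Anomaly detection on call patterns can be sketched with a rolling z-score: compare each day's value (for example, escalations or average handle time) against the mean and standard deviation of the preceding days. This is a minimal, assumed technique chosen for clarity, not the method of any particular product; the `flag_anomalies` function, the 7-day window, and the threshold of 3 are hypothetical choices.

```python
from statistics import mean, stdev


def flag_anomalies(values: list[float], window: int = 7, threshold: float = 3.0) -> list[int]:
    """Return indices whose value lies more than `threshold` sample standard deviations
    from the mean of the preceding `window` values (a rolling z-score).
    `window` must be at least 2 so the standard deviation is defined."""
    flagged = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: any change at all is treated as unusual.
            if values[i] != mu:
                flagged.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


daily_escalations = [12, 14, 11, 13, 12, 15, 13, 41, 12]
print(flag_anomalies(daily_escalations))
# [7]
```

Because a flagged day stays in the next window's baseline, it inflates that baseline's spread for the following week; a production system might exclude flagged points or use a robust statistic such as the median absolute deviation.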

AI-driven decision support also enables real-time monitoring and evaluation of call interactions. Risk managers can pinpoint vulnerabilities in customer service processes and address them before they escalate, and the resulting insights can guide training interventions aimed at specific risk areas. By integrating AI into their risk management frameworks, call centers can improve operational efficiency as well as customer satisfaction and trust. Applied strategically, AI changes how call centers approach risk, fostering a culture of continuous improvement and resilience.

Boosting Customer Interaction Quality Through AI-Driven Decision Support

AI-driven decision support also improves the quality of customer interactions in high-risk call centers. Using advanced algorithms and real-time data analysis, organizations can give staff insights that lead to more meaningful conversations. Agents gain a better understanding of customer needs, so they can engage proactively instead of reactively.

AI-driven systems can also streamline how customer concerns are identified and addressed. By prioritizing urgent inquiries, they shorten response times for the cases that matter most and raise customer satisfaction. Because they can gather and analyze large volumes of data, these systems help agents make informed decisions quickly. Training staff to use AI effectively builds a more engaged workforce and, in turn, better customer experiences. As organizations integrate AI further into their decision-making, the quality of customer interactions can improve significantly, fostering loyalty and trust.
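
One simple way to prioritize urgent inquiries is a priority queue keyed on an urgency score. The sketch below uses Python's `heapq` module and assumes urgency can be estimated from a few keywords plus how long the customer has already waited. The term weights, the `urgency` function, and the `InquiryQueue` class are hypothetical; a real system would tune or learn these signals (for example, from sentiment models).

```python
import heapq
import itertools

# Hypothetical urgency signals and weights, for illustration only.
URGENT_TERMS = {"fraud": 5, "stolen": 5, "cancel": 3, "complaint": 2}


def urgency(text: str, minutes_waiting: float) -> float:
    """Higher means more urgent: summed keyword weights plus a small boost for time waited."""
    t = text.lower()
    return sum(w for term, w in URGENT_TERMS.items() if term in t) + 0.1 * minutes_waiting


class InquiryQueue:
    """Max-priority queue of inquiries; ties are served first-come, first-served."""

    def __init__(self) -> None:
        self._heap: list[tuple[float, int, str]] = []
        self._counter = itertools.count()  # insertion order breaks ties

    def push(self, inquiry_id: str, text: str, minutes_waiting: float = 0.0) -> None:
        # heapq is a min-heap, so store the negated score to pop the most urgent first.
        score = urgency(text, minutes_waiting)
        heapq.heappush(self._heap, (-score, next(self._counter), inquiry_id))

    def pop(self) -> str:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


q = InquiryQueue()
q.push("A", "Question about my statement date", 4)
q.push("B", "My card was stolen and I see fraud", 1)
q.push("C", "I want to cancel my plan", 2)
print([q.pop() for _ in range(len(q))])
# ['B', 'C', 'A']
```

Including wait time in the score keeps low-urgency inquiries from being starved indefinitely when urgent calls keep arriving.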

Top AI Tools for Strategic Decision Support in High-Risk Call Centers

High-risk call centers face numerous challenges that demand precise and timely decision-making. To navigate them, organizations can turn to AI tools built for strategic decision support. These tools strengthen risk assessment, optimize customer interactions, and streamline operations, reducing the burden on human agents.

Among the top tools in this space is IBM Watson AI, which uses natural language processing to analyze customer interactions in real time. Google Contact Center AI is another, providing insight into customer intent and improving service delivery. NICE inContact and Genesys AI focus on data analysis and proactive customer engagement, while Avaya OneCloud AI integrates with existing systems to improve operational efficiency and decision-making accuracy. By adopting these tools, call centers can strengthen their strategic frameworks and raise overall performance.

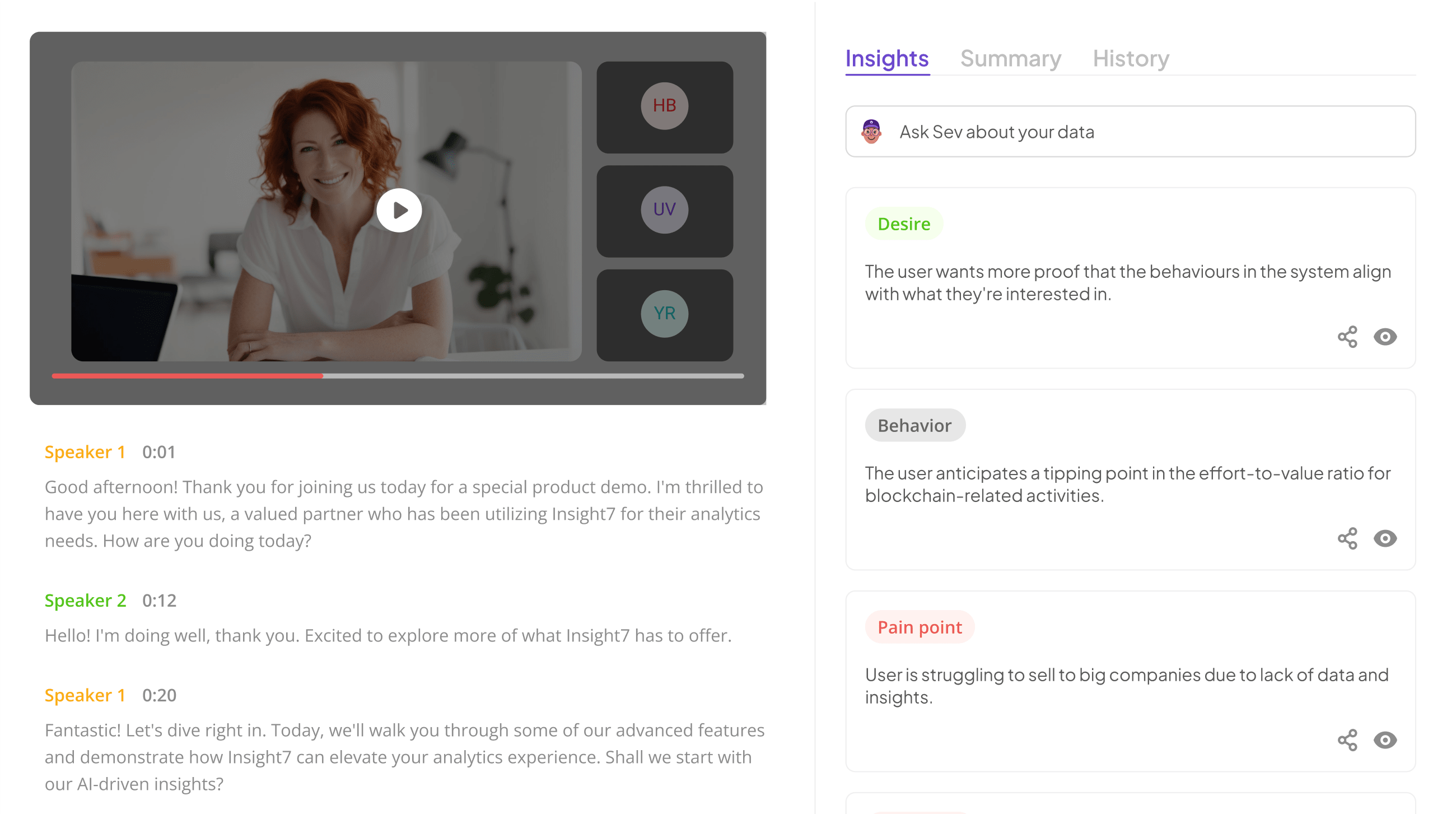

insight7

In high-risk call centers, AI-driven decision support has become a significant advantage. Call centers often handle volumes of customer interaction data that overwhelm manual analysis. With AI, decision-makers can efficiently extract insights from customer conversations and act quickly and strategically.

Integrating AI tools lets organizations streamline their workflows and improve the quality of customer interactions. It helps surface actionable insights that directly affect business strategy, making responses faster and more effective. It also improves collaboration across teams by centralizing critical customer feedback and keeping it readily accessible. For call centers aiming to outpace their competition, adopting AI-driven decision support is essential. It reflects a broader shift from a reactive to a proactive approach in customer service, leading to better outcomes and higher customer satisfaction.

IBM Watson AI

IBM Watson AI is a prominent option for AI-driven decision support. Its natural language processing capabilities let call centers analyze conversations in real time. By tracking key metrics such as agent performance and customer sentiment, it supports strategic decisions that improve service quality, and users can drill into detailed insights that guide responses and optimize operational processes.

Watson's machine learning models can also improve as they process data from more interactions, helping to predict potential issues and generate actionable recommendations. Call center managers can use these insights to target agent training, support compliance, and raise overall performance. By integrating this system, high-risk call centers can streamline workflows and reduce the risks associated with customer interactions, resulting in a more efficient operation.

Google Contact Center AI

Google Contact Center AI helps call centers improve decision-making and operational efficiency. It analyzes call data and agent performance, giving managers insights that support decisions during high-stakes interactions. By distinguishing the agent's voice from the customer's and capturing contextual information, it can quickly produce accurate performance evaluations and compliance reports.

Its analytics also identify trends and areas for improvement. Managers receive scorecards highlighting each agent's strengths and weaknesses, enabling tailored coaching that improves individual performance and overall interaction quality. In high-risk environments, where timely and informed decisions are paramount, these AI-driven insights are essential for operational success, helping organizations navigate complexity and achieve better outcomes for agents and customers alike.
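
The agent scorecards described above can be approximated by aggregating per-call evaluations. The sketch below is a generic, hypothetical illustration and does not use or reproduce Google Contact Center AI's API: it assumes each reviewed call has already produced a 0-100 score per criterion (from automated or manual review), and the `build_scorecards` function and criterion names are invented for the example. For each agent it reports the average per criterion, with the highest-scoring criterion as a strength and the lowest as a coaching focus.

```python
from collections import defaultdict
from collections.abc import Iterable
from statistics import mean


def build_scorecards(evaluations: Iterable[tuple[str, str, float]]) -> dict[str, dict]:
    """evaluations: (agent, criterion, score 0-100) tuples from individual call reviews.
    Returns {agent: {"averages": {criterion: avg}, "strength": str, "weakness": str}}."""
    by_agent: dict[str, dict[str, list[float]]] = defaultdict(lambda: defaultdict(list))
    for agent, criterion, score in evaluations:
        by_agent[agent][criterion].append(score)

    cards = {}
    for agent, criteria in by_agent.items():
        averages = {c: mean(scores) for c, scores in criteria.items()}
        cards[agent] = {
            "averages": averages,
            "strength": max(averages, key=averages.get),  # best-scoring criterion
            "weakness": min(averages, key=averages.get),  # candidate coaching focus
        }
    return cards


evals = [
    ("ana", "empathy", 90), ("ana", "compliance", 60),
    ("ana", "empathy", 80), ("ana", "compliance", 70),
    ("ben", "empathy", 55), ("ben", "compliance", 95),
]
cards = build_scorecards(evals)
print(cards["ana"]["strength"], cards["ana"]["weakness"])
# empathy compliance
```

Averaging over several calls before naming a weakness avoids coaching an agent on the basis of one unusual conversation.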

NICE inContact

NICE inContact brings AI-driven decision support to the operations of high-risk call centers, enabling organizations to analyze customer interactions efficiently and improve service quality. The platform captures data during calls, allowing managers to evaluate how closely agents follow established procedures. Performance metrics can then be established and refined, fostering an environment of accountability.

The system supports decision-making with AI tools that highlight important trends and issues in customer communications. Automating the screening process reduces the manual effort required for quality assurance, freeing staff to focus on resolving complex customer queries. Its analytics also guide ongoing training and development so that agents are equipped to handle high-risk situations. Overall, NICE inContact illustrates how AI-driven decision support can promote operational excellence in call centers.

Genesys AI

Genesys AI strengthens AI-driven decision support in high-risk call centers. Using advanced algorithms and natural language processing, it turns call data into actionable insights. Agents can view real-time performance metrics that show how they engage with customers, and leaders can use performance rankings and engagement scores to make informed decisions about training, compliance, and operational efficiency.

Genesys AI also helps call centers maintain high standards of service quality. By automating call analysis, it produces detailed reports on key performance indicators, and this immediate feedback lets agents improve their interactions continuously. With AI-driven decision support, call center leaders can manage risk effectively while building their team's capabilities, leading to higher client satisfaction and greater operational resilience.

Avaya OneCloud AI

Avaya OneCloud AI brings AI-driven decision support to traditional call center operations, with a focus on high-risk environments. It integrates advanced analytics and machine learning to identify trends proactively and improve decision-making. Through real-time data analysis, supervisors can monitor interactions efficiently, so critical compliance issues can be addressed promptly while quality assurance is streamlined.

Avaya OneCloud AI also helps prioritize customer interactions by analyzing caller sentiment and interaction history, letting teams focus on high-impact conversations and improving both customer satisfaction and operational efficiency. Organizations can allocate resources more effectively while mitigating the risks of high-volume call handling. Ultimately, this approach empowers decision-makers and creates a more responsive, resilient call center environment.

Conclusion: The Future of AI-Driven Decision Support in Call Centers

AI-driven decision support is poised to make call centers more efficient and improve customer service. As AI technologies evolve, we can expect systems that not only streamline data analysis but also deliver actionable insights in real time, giving agents the information they need to make informed decisions. This will let organizations adapt quickly to changing customer needs and market dynamics.

Going forward, AI-driven decision support will play a critical role in shaping strategic initiatives in high-risk environments. By automating routine tasks and analyzing patterns in customer interactions, these systems can reduce response times and improve overall service quality. Call centers that embrace these innovations will be better positioned to meet challenges head-on and take a proactive approach to customer engagement and satisfaction.
